Introduction

Welcome to the BLVM documentation.

BLVM (Bitcoin Low-Level Virtual Machine) implements Bitcoin consensus from the Orange Paper and provides protocol abstraction for multiple Bitcoin variants, a reference full node with P2P networking, a developer SDK, and cryptographic governance for transparent development.

What is BLVM?

BLVM is compiler-like infrastructure for Bitcoin implementations. There is a mathematical specification (the Orange Paper, treated as an intermediate representation / IR) and an implementation. The implementation is validated against the spec using BLVM Specification Lock (formal verification with Z3)—it is not generated or transformed from the IR. Alternative implementations can target the same Orange Paper and tooling.

Stack:

- Orange Paper – Mathematical specification (IR)

- blvm-spec-lock – Links code to spec; validates implementation against the IR

- blvm-consensus – Consensus implementation with formal verification

- blvm-protocol – Protocol abstraction (mainnet, testnet, regtest)

- blvm-node – Full node (storage, networking, RPC, modules)

- blvm-sdk – Developer toolkit and module composition

- Governance – Cryptographic governance enforcement

Why “LVM”? Like LLVM’s infrastructure for compilers, BLVM provides shared infrastructure for Bitcoin implementations—with the spec as the reference and the implementation validated against it, not generated from it.

Documentation Structure

- Getting Started – Installation, quick start, first node

- Architecture – System design, module system, events

- Layers – Consensus, protocol, node (each with overview and detailed pages)

- Developer SDK – Module development, API reference, examples

- Governance – Model, configuration, procedures

- Reference – Specifications, API Index, glossary

Documentation is maintained in source repositories alongside code and is aggregated at docs.thebitcoincommons.org.

Getting Help

Report bugs or request features via GitHub Issues, ask questions in GitHub Discussions, or report security issues to security@thebitcoincommons.org.

License

This documentation is licensed under the MIT License, same as the BLVM codebase.

Installation

This guide covers installing BLVM from pre-built binaries available on GitHub releases.

Prerequisites

Pre-built binaries are available for Linux, macOS, and Windows on common platforms. No Rust installation required - binaries are pre-compiled and ready to use.

Installing blvm-node

The reference node is the main entry point for running a BLVM node.

Quick Start

- Download the latest release from GitHub Releases

- Extract the archive for your platform:

# Linux

tar -xzf blvm-*-linux-x86_64.tar.gz

# macOS

tar -xzf blvm-*-macos-x86_64.tar.gz

# Windows

# Extract the .zip file using your preferred tool

- Move the binary to your PATH (optional but recommended):

# Linux/macOS

sudo mv blvm /usr/local/bin/

# Or add to your local bin directory

mkdir -p ~/.local/bin

mv blvm ~/.local/bin/

export PATH="$HOME/.local/bin:$PATH" # Add to ~/.bashrc or ~/.zshrc

- Verify installation:

blvm --version

Release Variants

Releases include two variants:

Base Variant (blvm-{version}-{platform}.tar.gz)

Stable, minimal release with core Bitcoin node functionality, production optimizations, standard storage backends, and process sandboxing. Use for production deployments prioritizing stability.

Experimental Variant (blvm-experimental-{version}-{platform}.tar.gz)

Full-featured build with experimental features: UTXO commitments, BIP119 CTV, Dandelion++, BIP158, Stratum V2, and enhanced signature operations counting. See Protocol Specifications for details.

Use for development, testing, or when experimental capabilities are required.
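The two naming patterns above can be captured in a small helper for download scripts. This is a sketch only: the function name `blvm_archive_name` is hypothetical, the version string `1.0.0` is a placeholder, and the helper covers the `.tar.gz` patterns shown above, not Windows `.zip` archives.

```bash
# Hypothetical helper: build a release archive name from the patterns above.
# Usage: blvm_archive_name <base|experimental> <version> <platform>
blvm_archive_name() {
  variant=$1 version=$2 platform=$3
  case "$variant" in
    base)         printf 'blvm-%s-%s.tar.gz\n' "$version" "$platform" ;;
    experimental) printf 'blvm-experimental-%s-%s.tar.gz\n' "$version" "$platform" ;;
    *)            echo "unknown variant: $variant" >&2; return 1 ;;
  esac
}

# Placeholder version and platform values, for illustration only.
blvm_archive_name base 1.0.0 linux-x86_64
blvm_archive_name experimental 1.0.0 linux-x86_64
```

Check the actual file names on the GitHub Releases page before scripting against them.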

Installing blvm-sdk Tools

The SDK tools (blvm-keygen, blvm-sign, blvm-verify) are included in the blvm-node release archives.

After extracting the release archive, you’ll find:

- blvm - Bitcoin reference node

- blvm-keygen - Generate governance keypairs

- blvm-sign - Sign governance messages

- blvm-verify - Verify signatures and multisig thresholds

All tools are in the same directory. Move them to your PATH as described above.

Platform-Specific Notes

Linux

- x86_64: Standard 64-bit Linux

- ARM64: For ARM-based systems (Raspberry Pi, AWS Graviton, etc.)

- glibc 2.31+: Required for Linux binaries

macOS

- x86_64: Intel Macs

- ARM64: Apple Silicon (M1/M2/M3)

- macOS 11.0+: Required for macOS binaries

Windows

- x86_64: 64-bit Windows

- Extract the .zip file and run blvm.exe from the extracted directory

- Add the directory to your PATH for command-line access

Verifying Installation

After installation, verify everything works:

# Check blvm-node version

blvm --version

# Check SDK tools

blvm-keygen --help

blvm-sign --help

blvm-verify --help
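If any of the commands above reports "command not found", the binary is probably not on your PATH. A minimal sketch for checking all four tools at once follows; the function name `missing_tools` is hypothetical and it relies only on the standard `command -v` lookup.

```bash
# Print each given command name that is NOT found on PATH.
# Returns non-zero if at least one is missing.
missing_tools() {
  rc=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "$tool"
      rc=1
    fi
  done
  return "$rc"
}

# Tool names are the ones listed in this guide.
missing_tools blvm blvm-keygen blvm-sign blvm-verify \
  || echo "Some tools are not on PATH; move them as described above." >&2
```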

Building from Source (Advanced)

Building from source is primarily for development. Crates in this stack declare rust-version 1.82 or 1.83 in their Cargo.toml (for example blvm-consensus and blvm-spec-lock use 1.83; blvm-node and blvm-protocol use 1.82). Use Rust 1.83 or later unless you are working only in a crate with a lower MSRV. Clone the blvm repository and follow the build instructions in its README.

Next Steps

- See Quick Start for running your first node

- See Node Configuration for detailed setup options

See Also

- Quick Start - Run your first node

- First Node Setup - Complete setup guide

- Node Configuration - Configuration options

- Node Overview - Node features and capabilities

- Release Process - How releases are created

- GitHub Releases - Download releases

Quick Start

Get up and running with BLVM in minutes.

Running Your First Node

After installing the binary, you can start a node:

Regtest Mode (Recommended for Development)

Regtest mode is safe for development - it creates an isolated network:

blvm

Or explicitly:

blvm --network regtest

Either command starts a node in regtest mode (the default), creating an isolated network with instant block generation for testing and development.

Testnet Mode

Connect to Bitcoin testnet:

blvm --network testnet

Mainnet Mode

⚠️ Warning: Only use mainnet if you understand the risks.

blvm --network mainnet

Basic Node Operations

Checking Node Status

Once the node is running, check its status via RPC:

# Regtest and testnet use port 18332; mainnet uses 8332

curl -X POST http://localhost:18332 \

-H "Content-Type: application/json" \

-d '{"jsonrpc": "2.0", "method": "getblockchaininfo", "params": [], "id": 1}'

Example Response:

{

"jsonrpc": "2.0",

"result": {

"chain": "regtest",

"blocks": 100,

"headers": 100,

"bestblockhash": "0f9188f13cb7b2c71f2a335e3a4fc328bf5beb436012afca590b1a11466e2206",

"difficulty": 4.656542373906925e-10,

"mediantime": 1231006505,

"verificationprogress": 1.0,

"chainwork": "0000000000000000000000000000000000000000000000000000000000000064",

"pruned": false,

"initialblockdownload": false

},

"id": 1

}

Getting Peer Information

# Regtest and testnet use port 18332; mainnet uses 8332

curl -X POST http://localhost:18332 \

-H "Content-Type: application/json" \

-d '{"jsonrpc": "2.0", "method": "getpeerinfo", "params": [], "id": 2}'

Getting Mempool Information

# Regtest and testnet use port 18332; mainnet uses 8332

curl -X POST http://localhost:18332 \

-H "Content-Type: application/json" \

-d '{"jsonrpc": "2.0", "method": "getmempoolinfo", "params": [], "id": 3}'

Verifying Installation

blvm --version # Verify installation

blvm --help # View available options

The node connects to the P2P network, syncs blockchain state, accepts RPC commands on port 8332 (mainnet default) or 18332 (testnet/regtest), and can mine blocks if configured.
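Because the RPC port depends on the network, the curl calls in this section can be wrapped so the port is chosen in one place. The sketch below uses the default ports stated above; the function names `rpc_port`, `rpc_body`, and `rpc_call` are hypothetical, and `rpc_call` only handles methods that take no parameters.

```bash
# Default RPC port for a network, per the defaults stated in this guide.
rpc_port() {
  case "$1" in
    mainnet)         echo 8332 ;;
    testnet|regtest) echo 18332 ;;
    *) echo "unknown network: $1" >&2; return 1 ;;
  esac
}

# Build a JSON-RPC 2.0 request body for a method with no parameters.
# $1 = method name, $2 = request id (defaults to 1)
rpc_body() {
  printf '{"jsonrpc": "2.0", "method": "%s", "params": [], "id": %s}\n' "$1" "${2:-1}"
}

# POST a no-parameter method to a node on localhost.
# $1 = network, $2 = method name
rpc_call() {
  curl -s -X POST "http://localhost:$(rpc_port "$1")" \
    -H "Content-Type: application/json" \
    -d "$(rpc_body "$2")"
}

# Example (needs a running node): rpc_call regtest getblockchaininfo
```

If you start the node with a non-default RPC address, pass that address to curl directly instead of relying on this mapping.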

Using the SDK

Generate a Governance Keypair

blvm-keygen --output my-key.key

Sign a Message

blvm-sign release \

--version v1.0.0 \

--commit abc123 \

--key my-key.key \

--output signature.txt

Verify Signatures

blvm-verify release \

--version v1.0.0 \

--commit abc123 \

--signatures sig1.txt,sig2.txt,sig3.txt \

--threshold 3-of-5 \

--pubkeys keys.json
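The `--threshold 3-of-5` argument reads as "at least 3 valid signatures from a set of 5 keys". The sketch below illustrates only that counting rule, assuming the `N-of-M` format shown above. It does not verify signatures (blvm-verify does that), and the function name `threshold_met` is hypothetical.

```bash
# Succeeds when <valid_count> meets the N of an "N-of-M" threshold string.
# Returns 1 when the threshold is not met and 2 on malformed input.
threshold_met() {
  threshold=$1 valid_count=$2
  case "$threshold" in
    *-of-*) ;;
    *) echo "bad threshold: $threshold" >&2; return 2 ;;
  esac
  required=${threshold%%-of-*}   # N: text before "-of-"
  total=${threshold##*-of-}      # M: text after "-of-"
  for n in "$required" "$total" "$valid_count"; do
    case "$n" in
      ''|*[!0-9]*) echo "not a number: '$n'" >&2; return 2 ;;
    esac
  done
  [ "$required" -le "$total" ] || { echo "N exceeds M: $threshold" >&2; return 2; }
  [ "$valid_count" -ge "$required" ]
}

# Three valid signatures satisfy a 3-of-5 threshold.
threshold_met 3-of-5 3 && echo "threshold met"
```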

Next Steps

- First Node Setup - Detailed configuration guide

- Node Configuration - Full configuration options

- RPC API Reference - Interact with your node

See Also

- Installation - Installing BLVM

- First Node Setup - Complete setup guide

- Node Configuration - Configuration options

- Node Operations - Managing your node

- RPC API Reference - Complete RPC API

- Troubleshooting - Common issues

First Node Setup (regtest)

This guide is regtest-only from top to bottom — local chain, no public seeds, default RPC 127.0.0.1:18332. You are not syncing mainnet here.

Optional later: Configuration examples include testnet and mainnet. Ignore those until you intentionally switch networks.

Regtest: step-by-step

Step 1: Create configuration directory

mkdir -p ~/.config/blvm

cd ~/.config/blvm

Step 2: Create blvm.toml (regtest)

Create ~/.config/blvm/blvm.toml:

# --- regtest (local dev) ---

transport_preference = "tcponly"

# BLVM P2P listen — use a port other than 18444 if another local regtest peer already binds 18444 (common default).

listen_addr = "127.0.0.1:18445"

protocol_version = "Regtest"

[storage]

data_dir = "~/.local/share/blvm"

database_backend = "auto"

[logging]

level = "info"

- transport_preference is required. If TOML parsing fails, the node never applies protocol_version = "Regtest"; fix the file before continuing.

- P2P port: 18444 is a widely used default regtest listen port. Give BLVM a different port (e.g. 18445) when two nodes share a host; point persistent_peers at the other peer’s address when you want blocks from it.

- RPC is not in this file: use defaults (127.0.0.1:18332) or --rpc-addr / BLVM_RPC_ADDR.

Step 2b: Validate the file

From any directory, using the same blvm binary you will run:

/path/to/blvm config validate --path ~/.config/blvm/blvm.toml

You should see Configuration file is valid. If not, fix the TOML; do not start sync until this passes.

Step 3: Start the regtest node

Same binary as validate. Clear mainnet IBD env vars if your shell still has them from other work:

env -u BLVM_ASSUME_VALID_HEIGHT -u BLVM_ASSUMEVALID \

/path/to/blvm --config ~/.config/blvm/blvm.toml --verbose

Confirm regtest in the first log lines:

- Configuration loaded successfully from file

- Network: Regtest

- Data directory: matches your [storage].data_dir

No config file (still regtest):

env -u BLVM_ASSUME_VALID_HEIGHT -u BLVM_ASSUMEVALID \

/path/to/blvm -n regtest -d ~/.local/share/blvm --verbose

Example lines:

[INFO] blvm: Network: Regtest

[INFO] blvm: Data directory: /home/you/.local/share/blvm

[INFO] blvm_node::rpc: Starting TCP RPC server on 127.0.0.1:18332

Peers on regtest: there are no public DNS seeds. 0 peers and “skipping DNS seed discovery” are normal. To sync blocks you need another regtest peer (e.g. a second blvm, or any regtest full node) and persistent_peers / a local harness — that is still regtest, not mainnet.

Step 4: Verify regtest RPC

In another terminal (regtest RPC port 18332):

curl -X POST http://localhost:18332 \

-H "Content-Type: application/json" \

-d '{"jsonrpc": "2.0", "method": "getblockchaininfo", "params": [], "id": 1}'

Expected Response:

{

"jsonrpc": "2.0",

"result": {

"chain": "regtest",

"blocks": 0,

"headers": 0,

"bestblockhash": "...",

"difficulty": 4.656542373906925e-10,

"mediantime": 1231006505,

"verificationprogress": 1.0,

"chainwork": "0000000000000000000000000000000000000000000000000000000000000001",

"pruned": false,

"initialblockdownload": false

},

"id": 1

}
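To script this check rather than read the JSON by eye, the `chain` and `blocks` fields can be pulled out of the response. The following is a sed-based sketch with hypothetical function names (`json_field`, `is_regtest`). It is not a JSON parser and assumes simple values like those in the response above; prefer `jq` where it is installed.

```bash
# Print the first value of a named field from JSON text on stdin.
# Assumes string, number, or boolean values without embedded commas or braces.
json_field() {
  sed -n 's/.*"'"$1"'"[[:space:]]*:[[:space:]]*\([^,}]*\).*/\1/p' \
    | head -n 1 | tr -d '"'
}

# Succeeds when a getblockchaininfo response reports the regtest chain.
is_regtest() {
  [ "$(printf '%s\n' "$1" | json_field chain)" = "regtest" ]
}

# Sample text shaped like the response above (not live node output).
sample='{"jsonrpc": "2.0", "result": {"chain": "regtest", "blocks": 0, "headers": 0}, "id": 1}'
is_regtest "$sample" \
  && echo "regtest at height $(printf '%s\n' "$sample" | json_field blocks)"
```

With a running node, pass the output of the curl command from this step as the argument to `is_regtest`.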

Configuration examples (other networks)

The steps above are regtest. Below are copy-paste starting points if you leave regtest.

Development node (regtest, extended)

transport_preference = "tcponly"

listen_addr = "127.0.0.1:18445"

protocol_version = "Regtest"

[storage]

data_dir = "~/.local/share/blvm"

database_backend = "auto"

[rbf]

mode = "standard" # Standard RBF for development

[mempool]

max_mempool_mb = 100

min_relay_fee_rate = 1

Start with: blvm -n regtest -d ~/.local/share/blvm (RPC defaults to 127.0.0.1:18332).

Testnet node

transport_preference = "tcponly"

listen_addr = "127.0.0.1:18333"

protocol_version = "Testnet3"

[storage]

data_dir = "~/.local/share/blvm-testnet"

database_backend = "redb"

[rbf]

mode = "standard"

[mempool]

max_mempool_mb = 300

min_relay_fee_rate = 1

eviction_strategy = "lowest_fee_rate"

Start with: blvm -n testnet -d ~/.local/share/blvm-testnet -r 127.0.0.1:18332

Production mainnet node

transport_preference = "tcponly"

listen_addr = "0.0.0.0:8333"

protocol_version = "BitcoinV1"

[storage]

data_dir = "/var/lib/blvm"

database_backend = "redb"

[storage.cache]

# Example values; canonical defaults in [Configuration Reference](../reference/configuration-reference.md)

block_cache_mb = 200

utxo_cache_mb = 100

header_cache_mb = 20

[rbf]

mode = "standard"

[mempool]

max_mempool_mb = 300

max_mempool_txs = 100000

min_relay_fee_rate = 1

eviction_strategy = "lowest_fee_rate"

max_ancestor_count = 25

max_descendant_count = 25

Start with: blvm -n mainnet -d /var/lib/blvm -r 127.0.0.1:8332. Use [rpc_auth] and RPC_AUTH_TOKENS for production.

See Node Configuration for complete configuration options.

Storage

The node stores blockchain data (blocks, UTXO set, chain state, and indexes) in the configured data directory. See Storage Backends for configuration options.

Peers and sync (regtest vs public networks)

- Regtest: No wide-area peer discovery. You only get blocks from peers you configure (persistent_peers, local second node, or another regtest bitcoind). Staying at height 0 with 0 peers is expected until you add that.

- Mainnet / testnet: DNS seeds and addr relay apply; IBD pulls from the public network.

Regtest: how you get past genesis (height > 0)

You need a peer that already has blocks, or a datadir that already contains those blocks. A lone BLVM on regtest with no peers stays at genesis — that is not “missing a feature,” it is how regtest is defined (no public seeds).

1. Second regtest full node (typical way to create blocks)

Run a reference regtest daemon on default P2P 127.0.0.1:18444, mine some blocks, then run BLVM on a different local P2P port and peer outbound to it. Example using the common bitcoind / bitcoin-cli CLI:

bitcoind -regtest -daemon

bitcoin-cli -regtest createwallet w

ADDR=$(bitcoin-cli -regtest getnewaddress)

bitcoin-cli -regtest generatetoaddress 200 "$ADDR"

BLVM TOML (listen on 18445, peer the regtest seed on 18444; matches local/regtest-two-node-seed-bootstrap.toml):

transport_preference = "tcponly"

listen_addr = "127.0.0.1:18445"

protocol_version = "Regtest"

persistent_peers = ["127.0.0.1:18444"]

[storage]

data_dir = "~/.local/share/blvm-regtest-from-core"

database_backend = "auto"

[ibd]

mode = "parallel"

preferred_peers = ["127.0.0.1:18444"]

[logging]

level = "info"

Start BLVM with that config (or pass --listen-addr 127.0.0.1:18445 if your file is merged with other settings); IBD should pull blocks until it matches the peer’s tip.

2. Two BLVM nodes

Works once the seed already has height > 0 (from (1), or from copying a populated seed data_dir). A follower then syncs from the seed over persistent_peers. If both nodes start from an empty datadir, both stay at genesis — add blocks with (1) first, or reuse a filled datadir.

3. Reuse a populated data directory

Copy/sync the configured [storage].data_dir from a machine that already completed regtest sync for the same network.

4. submitblock on a running BLVM node

On a normal node (RPC server wired with NetworkManager), submitblock checks that the block’s prev_block_hash matches the current tip, then queues the block for the same run-loop processing as P2P-received blocks, so a valid next block can extend your local chain. You still have to produce that block (for example getblocktemplate plus a miner); BLVM does not yet mirror every convenience RPC some reference stacks expose for regtest mining.

If MiningRpc is used without a network manager (some tests or minimal tooling), submitblock remains validation-only and does not advance the chain.

If you use the workspace local harness (BitcoinCommons/local/regtest-two-blvm.sh), regtest-two-node-seed-bootstrap.toml is wired for a regtest seed on :18444 and BLVM P2P on :18445. After the seed has blocks, run ./local/regtest-two-blvm.sh start-seed-bootstrap, then start-follower (or start-both after the seed has height > 0) as documented in the script header.

RPC interface (regtest)

Default listen in this guide: 127.0.0.1:18332.

curl -s -X POST http://127.0.0.1:18332 \

-H "Content-Type: application/json" \

-d '{"jsonrpc":"2.0","method":"getblockchaininfo","params":[],"id":1}'

Mainnet uses port 8332 by default; do not use that for the regtest flow above.

See RPC API Reference for the full API.

See Also

- Node Configuration - Complete configuration options

- Node Operations - Running and managing your node

- RPC API Reference - Complete API documentation

- Troubleshooting - Common issues and solutions

Security Considerations

⚠️ Important: This implementation is designed for pre-production testing and development. Additional hardening is required for production mainnet use. Use regtest or testnet for development, never expose RPC to untrusted networks, configure RPC authentication, and keep software updated.

Troubleshooting

See Troubleshooting for common issues and solutions.

RBF Configuration Example

Complete example of configuring RBF (Replace-By-Fee) for different use cases.

Exchange Node Configuration

For exchanges that need to protect users from unexpected transaction replacements:

[rbf]

mode = "conservative"

min_fee_rate_multiplier = 2.0

min_fee_bump_satoshis = 5000

min_confirmations = 1

max_replacements_per_tx = 3

cooldown_seconds = 300

[mempool]

max_mempool_mb = 500

max_mempool_txs = 200000

min_relay_fee_rate = 2

eviction_strategy = "lowest_fee_rate"

max_ancestor_count = 25

max_descendant_count = 25

persist_mempool = true

Why This Configuration:

- Conservative RBF: Requires 2x fee increase, preventing low-fee replacements

- 1 Confirmation Required: Additional safety check before allowing replacement

- Higher Fee Threshold: 2 sat/vB minimum relay fee filters low-priority transactions

- Mempool Persistence: Survives restarts for better reliability

Mining Pool Configuration

For mining pools that want to maximize fee revenue:

[rbf]

mode = "aggressive"

min_fee_rate_multiplier = 1.05

min_fee_bump_satoshis = 500

allow_package_replacements = true

max_replacements_per_tx = 10

cooldown_seconds = 60

[mempool]

max_mempool_mb = 1000

max_mempool_txs = 500000

min_relay_fee_rate = 1

eviction_strategy = "lowest_fee_rate"

max_ancestor_count = 50

max_descendant_count = 50

Why This Configuration:

- Aggressive RBF: Only 5% fee increase required, maximizing fee opportunities

- Package Replacements: Allows parent+child transaction replacements

- Larger Mempool: 1GB capacity for more transaction opportunities

- Relaxed Ancestor Limits: 50 transactions for larger packages

Standard Node Configuration

For general-purpose nodes using conventional mempool defaults:

[rbf]

mode = "standard"

min_fee_rate_multiplier = 1.1

min_fee_bump_satoshis = 1000

[mempool]

max_mempool_mb = 300

max_mempool_txs = 100000

min_relay_fee_rate = 1

eviction_strategy = "lowest_fee_rate"

max_ancestor_count = 25

max_descendant_count = 25

mempool_expiry_hours = 336

Why This Configuration:

- Standard RBF: BIP125-compliant with 10% fee increase

- Conventional defaults: Matches common mainnet mempool parameters

- Balanced: Good for most use cases
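To compare the three profiles, it helps to run the same replacement through each pair of thresholds. The sketch below assumes a replacement must satisfy both `min_fee_rate_multiplier` (on the fee rate) and `min_fee_bump_satoshis` (on the absolute fee). How BLVM actually combines these settings is defined in RBF and Mempool Policies, not here, and the function name `rbf_thresholds_met` is hypothetical.

```bash
# Assumed rule (illustration only):
#   new_rate >= old_rate * min_fee_rate_multiplier
#   new_fee - old_fee >= min_fee_bump_satoshis
# Args: old_rate new_rate old_fee new_fee multiplier min_bump
# Rates are in sat/vB, fees in satoshis.
rbf_thresholds_met() {
  awk -v old_rate="$1" -v new_rate="$2" -v old_fee="$3" -v new_fee="$4" \
      -v mult="$5" -v bump="$6" 'BEGIN {
        ok = (new_rate >= old_rate * mult) && (new_fee - old_fee >= bump)
        exit(ok ? 0 : 1)
      }'
}

# A 200 vB transaction bumped from 10 to 20 sat/vB (fee 2000 -> 4000 sat),
# checked against the multiplier and bump values from the three configs above.
rbf_thresholds_met 10 20 2000 4000 1.1 1000  && echo "standard: thresholds met"
rbf_thresholds_met 10 20 2000 4000 2.0 5000  || echo "conservative: thresholds not met"
rbf_thresholds_met 10 20 2000 4000 1.05 500  && echo "aggressive: thresholds met"
```

In this example the conservative profile fails on the absolute bump (2000 sat against a 5000 sat minimum) even though the fee rate doubled. Settings such as `min_confirmations`, `max_replacements_per_tx`, and `cooldown_seconds` are not modeled.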

Testing RBF Configuration

Test Transaction Replacement

- Create initial transaction:

# Send transaction with RBF signaling (BIP125: nSequence < 0xfffffffe)

bitcoin-cli -named sendtoaddress address=<address> amount=0.001 replaceable=true

- Replace with higher fee:

# Create replacement with higher fee (fee_rate in sat/vB)

bitcoin-cli bumpfee <txid> '{"fee_rate": 20}'

- Verify replacement:

# Check mempool

curl -X POST http://localhost:8332 \

-H "Content-Type: application/json" \

-d '{"jsonrpc": "2.0", "method": "getmempoolentry", "params": ["<new_txid>"], "id": 1}'

Monitor RBF Activity

# Get mempool info

curl -X POST http://localhost:8332 \

-H "Content-Type: application/json" \

-d '{"jsonrpc": "2.0", "method": "getmempoolinfo", "params": [], "id": 1}'

Expected Response:

{

"jsonrpc": "2.0",

"result": {

"size": 1234,

"bytes": 567890,

"usage": 1234567,

"maxmempool": 314572800,

"mempoolminfee": 0.00001,

"minrelaytxfee": 0.00001

},

"id": 1

}
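The `maxmempool` value in this response is `max_mempool_mb = 300` expressed in bytes (300 × 1024 × 1024). The fee fields look like the conventional Bitcoin RPC unit of BTC per 1000 vbytes, which would make 0.00001 equal to 1 sat/vB. That unit is an assumption here, so confirm it in the RPC API Reference. Both conversions as a sketch, with hypothetical function names:

```bash
# Megabytes (binary, 1024 * 1024 bytes) to bytes.
mb_to_bytes() {
  echo $(( $1 * 1024 * 1024 ))
}

# BTC per kvB to sat per vB: multiply by 1e8 sat/BTC, divide by 1000 vB/kvB.
btc_per_kvb_to_sat_per_vb() {
  awk -v rate="$1" 'BEGIN { printf "%g\n", rate * 100000000 / 1000 }'
}

mb_to_bytes 300                    # 314572800, the maxmempool value above
btc_per_kvb_to_sat_per_vb 0.00001  # 1, assuming BTC/kvB units
```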

See Also

- RBF and Mempool Policies - Complete configuration guide

- Node Configuration - All configuration options

- Node Operations - Managing your node

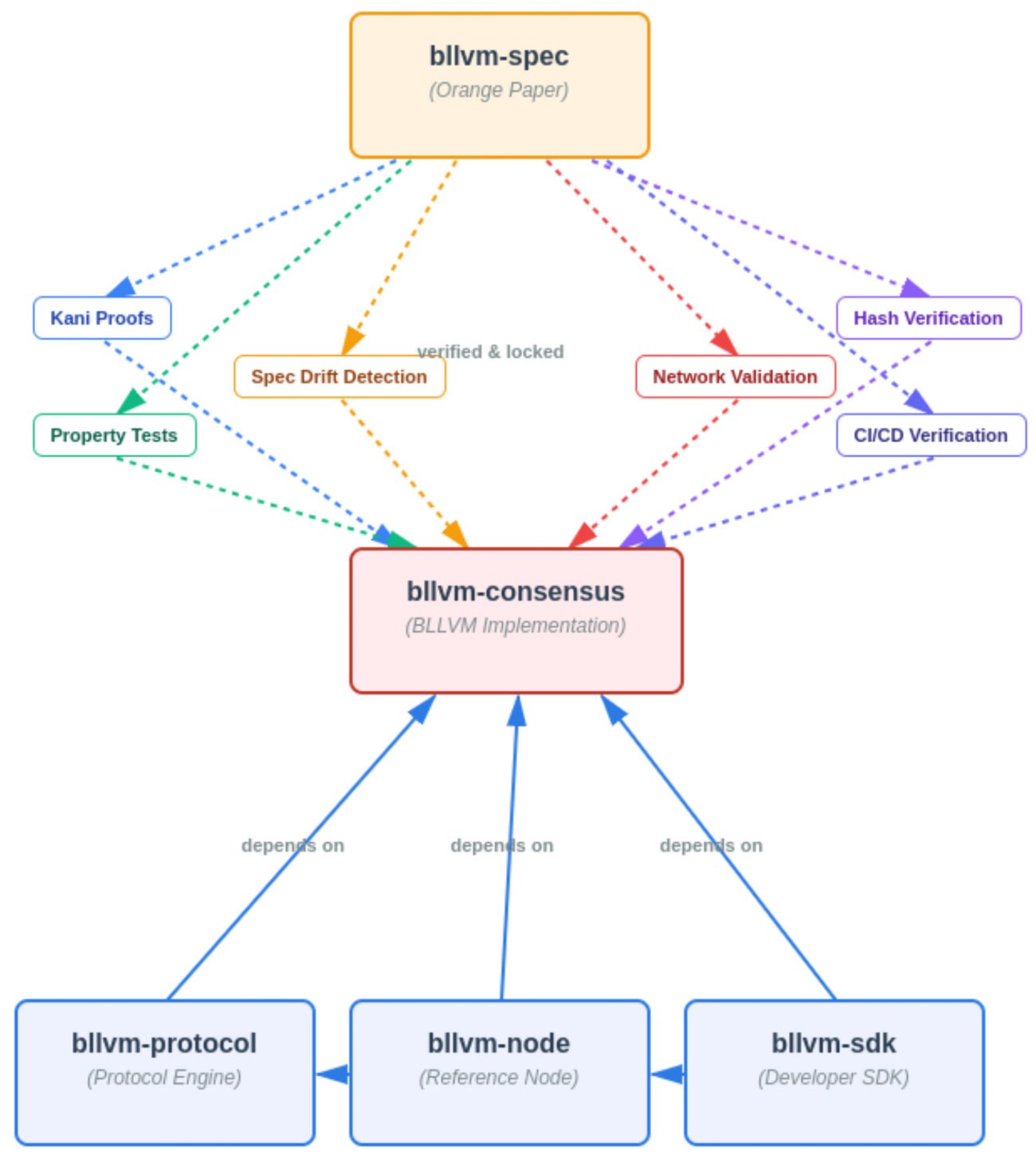

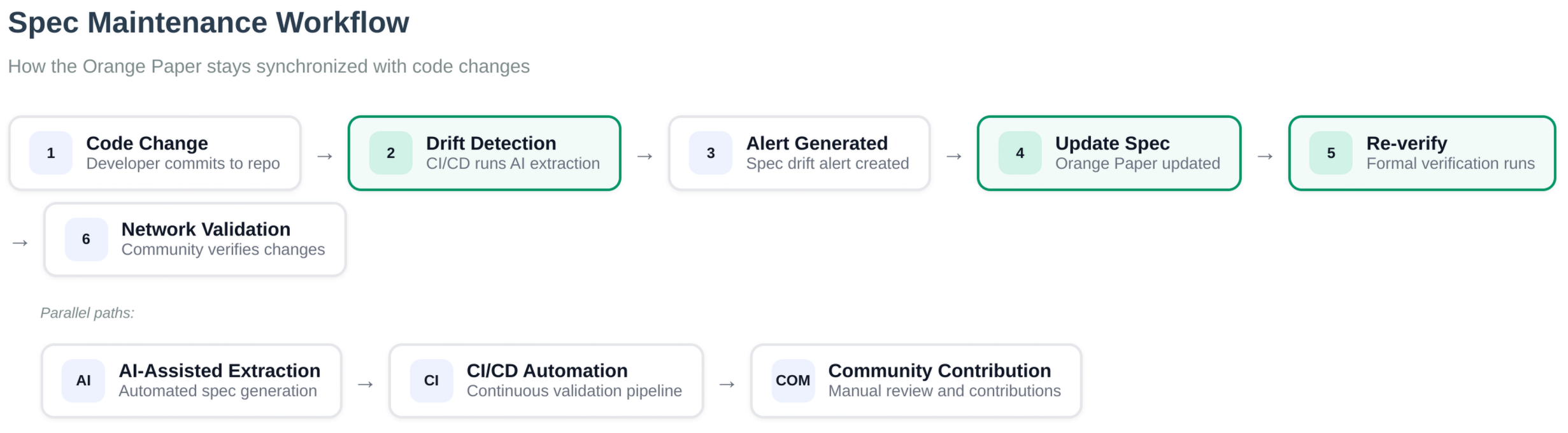

System Overview

Bitcoin Commons is a Bitcoin implementation ecosystem with six tiers building on the Orange Paper mathematical specifications. blvm-consensus and blvm-protocol share the blvm-primitives crate for types, serialization, and crypto. The system implements consensus rules directly from the spec, provides protocol abstraction, delivers a full node implementation, and includes a developer SDK.

6-Tier Component Architecture

- T1: Orange Paper (Mathematical Foundation)

- T2: blvm-consensus (Pure Math Implementation)

- T3: blvm-protocol (Protocol Abstraction)

- T4: blvm-node (Full Node Implementation)

- T5: blvm-sdk (Developer Toolkit)

- T6: blvm-commons (Governance Enforcement)

Edges: T1 → T2 (direct implementation), T2 → T3 (protocol abstraction), T3 → T4 (full node), T4 → T5 (ergonomic API), T5 → T6 (cryptographic governance).

BLVM Stack Architecture

Figure: BLVM stack (marketing image): Orange Paper / blvm-spec as the foundation, blvm-consensus with verification tooling, then blvm-protocol, blvm-node, blvm-sdk, and governance enforcement (blvm-commons). The numbered 6-tier diagram above is the canonical layer list.


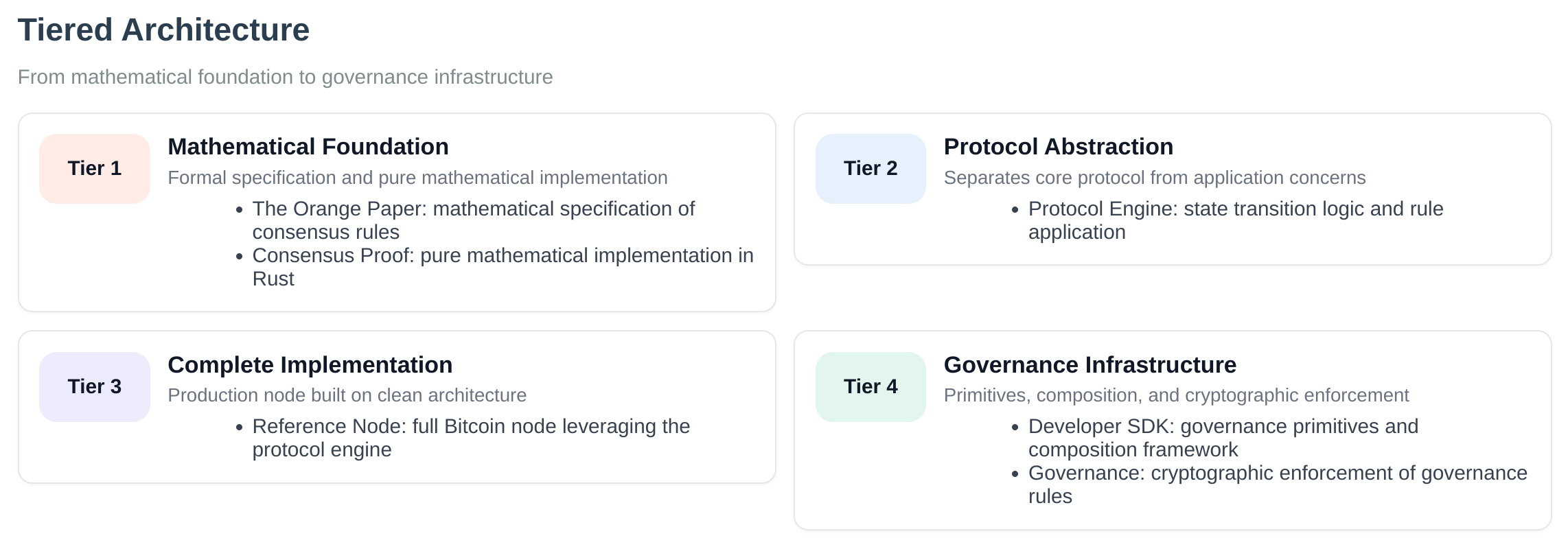

Tiered Architecture

Figure: High-level tiered view (simplified graphic). Canonical numbering is the six layers in the mermaid diagram and section headings above (Orange Paper → consensus → protocol → node → SDK → blvm-commons); this image simplifies the stack for layout.


Component Overview

Tier 1: Orange Paper (Mathematical Foundation)

- Mathematical specifications for Bitcoin consensus rules

- Source of truth for all implementations

- Timeless, immutable consensus rules

Tier 2: blvm-consensus (Pure Math Implementation)

- Direct implementation of Orange Paper functions

- Formal proofs verify mathematical correctness

- Side-effect-free, deterministic functions

- Consensus-critical dependencies pinned to exact versions

Code: README.md

Tier 3: blvm-protocol (Protocol Abstraction)

- Bitcoin protocol abstraction for multiple variants

- Supports mainnet, testnet, regtest

- Commons-specific protocol extensions (UTXO commitments, ban list sharing)

- BIP implementations (BIP152, BIP157, BIP158, BIP173/350/351)

Code: README.md

Tier 4: blvm-node (Node Implementation)

- Reference full node (non-consensus infrastructure: storage, P2P, RPC, modules); operational hardening required for real deployments

- Storage layer (database abstraction with multiple backends)

- Network manager (multi-transport: TCP, QUIC, Iroh)

- RPC server (JSON-RPC 2.0, conventional Bitcoin RPC surface)

- Module system (process-isolated runtime modules)

- Payment processing with CTV (CheckTemplateVerify) support

- RBF and mempool policies (4 configurable modes)

- Advanced indexing (address and value range indexing)

- Mining coordination (Stratum V2, merge mining)

- P2P governance message relay

- Governance integration (webhooks, user signaling)

- ZeroMQ notifications (optional)

Code: README.md

Tier 5: blvm-sdk (Developer Toolkit)

- Governance primitives (key management, signatures, multisig)

- CLI tools (blvm-keygen, blvm-sign, blvm-verify)

- Composition framework (declarative node composition)

- Bitcoin-compatible signing standards

Code: README.md

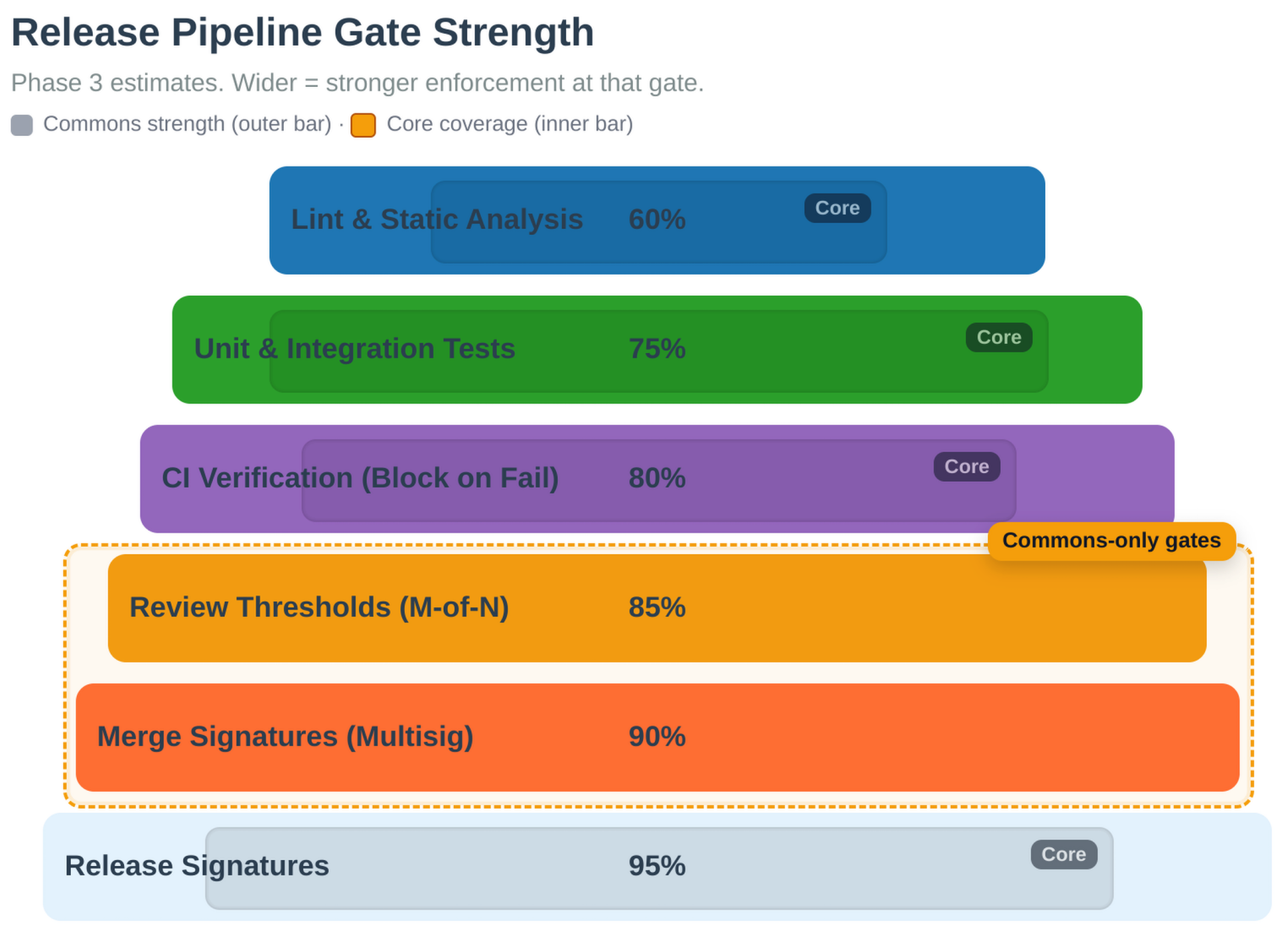

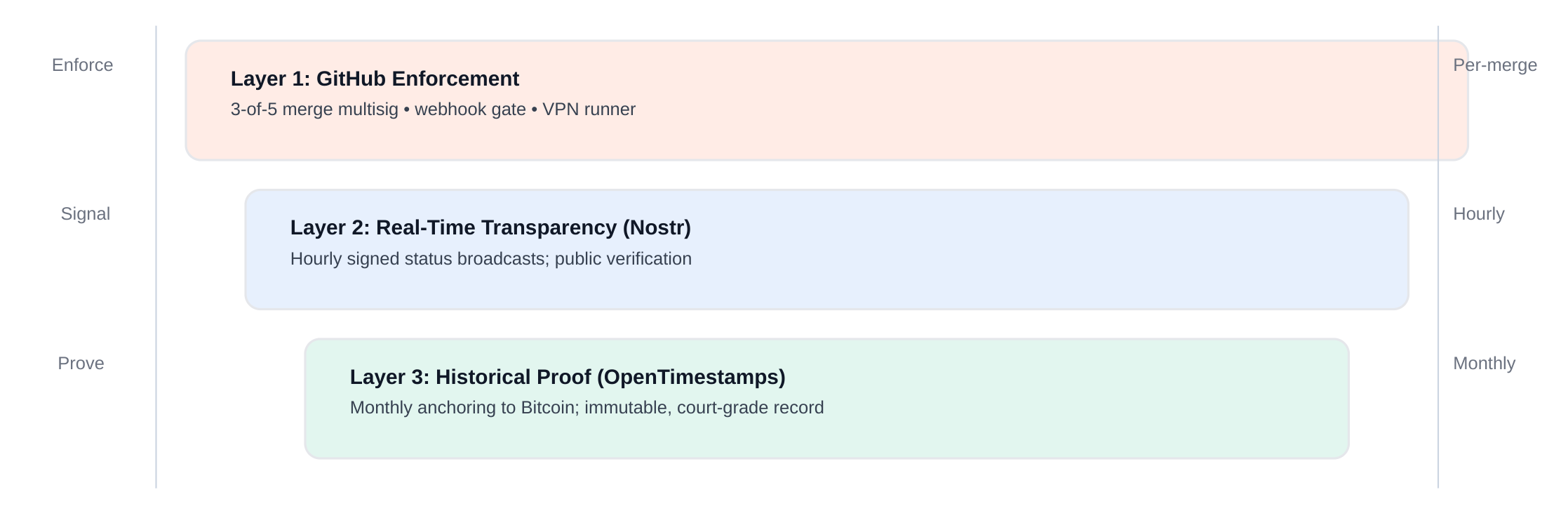

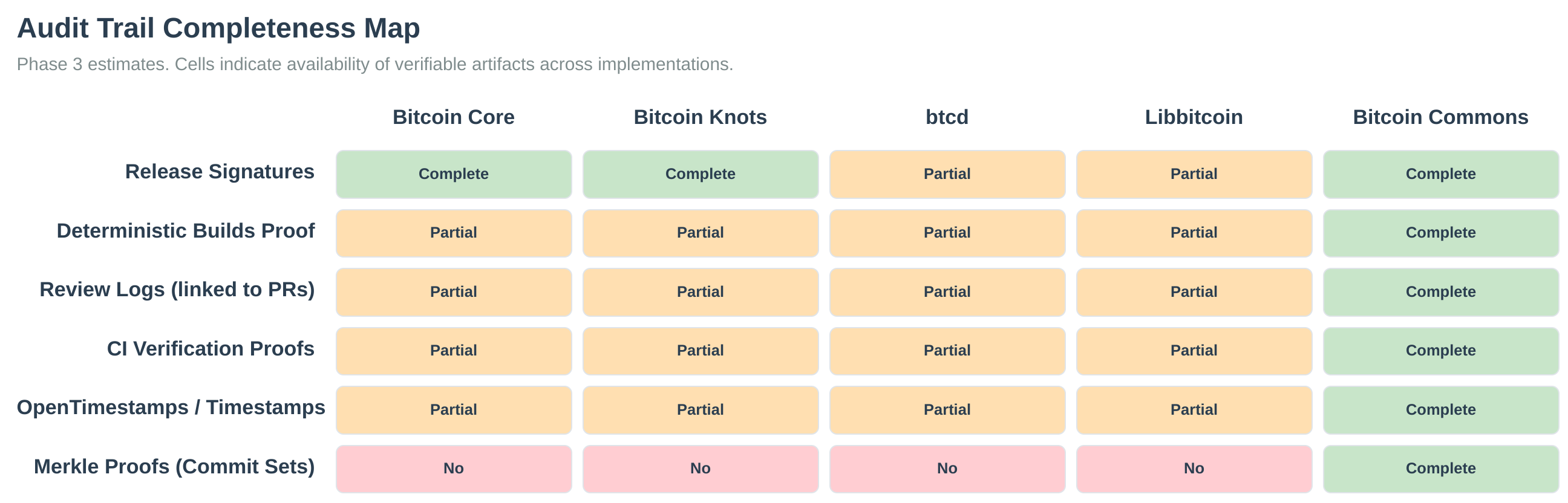

Tier 6: blvm-commons (Governance Enforcement)

- GitHub App for governance enforcement

- Cryptographic signature verification

- Multisig threshold enforcement

- Audit trail management

- OpenTimestamps integration

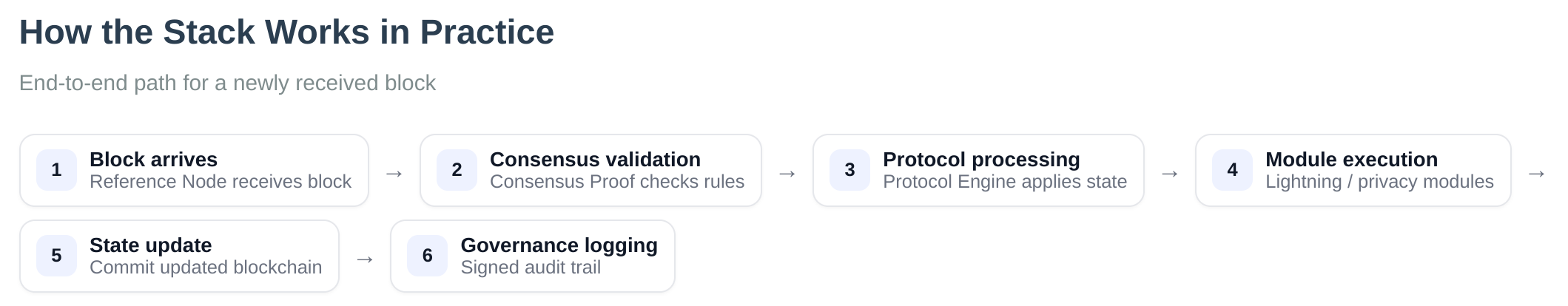

Data Flow

- Orange Paper provides mathematical consensus specifications

- blvm-consensus directly implements mathematical functions

- blvm-protocol layers protocol parameters and network behavior on blvm-consensus types and validation

- blvm-node uses blvm-protocol and blvm-consensus for validation

- blvm-sdk provides governance primitives

- blvm-commons uses blvm-sdk and blvm-protocol for governance enforcement and shared types

Cross-Layer Validation

- Dependencies between layers are strictly enforced in the crate graph (application layers do not reimplement consensus).

- Consensus rule modifications are prevented in application layers by design (validation calls into blvm-consensus).

- The Orange Paper is the specification; blvm-consensus is checked with formal verification, tests, and review—not a single proof of the entire spec in one step.

- Version coordination (Cargo / release sets) keeps compatible crate versions together.

Key Features

Mathematical Rigor

- Direct implementation of Orange Paper specifications

- Formal verification with BLVM Specification Lock

- Property-based testing for mathematical invariants

- Formal proofs verify critical consensus functions

Protocol Abstraction

- Multiple Bitcoin variants (mainnet, testnet, regtest)

- Commons-specific protocol extensions

- BIP implementations (BIP152, BIP157, BIP158)

- Protocol evolution support

Node and operational features

- Full Bitcoin node–style functionality (when configured and secured appropriately)

- Performance optimizations (PGO, parallel validation)

- Multiple storage backends with automatic fallback

- Multi-transport networking (TCP, QUIC, Iroh)

- Payment processing infrastructure

- REST API alongside JSON-RPC

Governance Infrastructure

- Cryptographic governance primitives

- Multisig threshold enforcement

- Transparent audit trails

- Forkable governance rules

See Also

- Component Relationships - Detailed component interactions

- Design Philosophy - Core design principles

- Module System - Module system architecture

- Node Overview - Node implementation details

- Consensus Overview - Consensus layer details

- Protocol Overview - Protocol layer details

Component Relationships

BLVM implements a 6-tier layered architecture where each tier builds upon the previous one.

Dependency Graph

Edges point from a crate toward a crate it depends on (library import direction). The Orange Paper is not a Rust crate; it informs consensus (dotted).

blvm-primitives (types, serialization, crypto) sits under blvm-consensus and blvm-protocol; it is not shown as its own tier here.

Layer Descriptions

Tier 1: Orange Paper (blvm-spec)

- Purpose: Mathematical foundation - timeless consensus rules

- Type: Documentation and specification

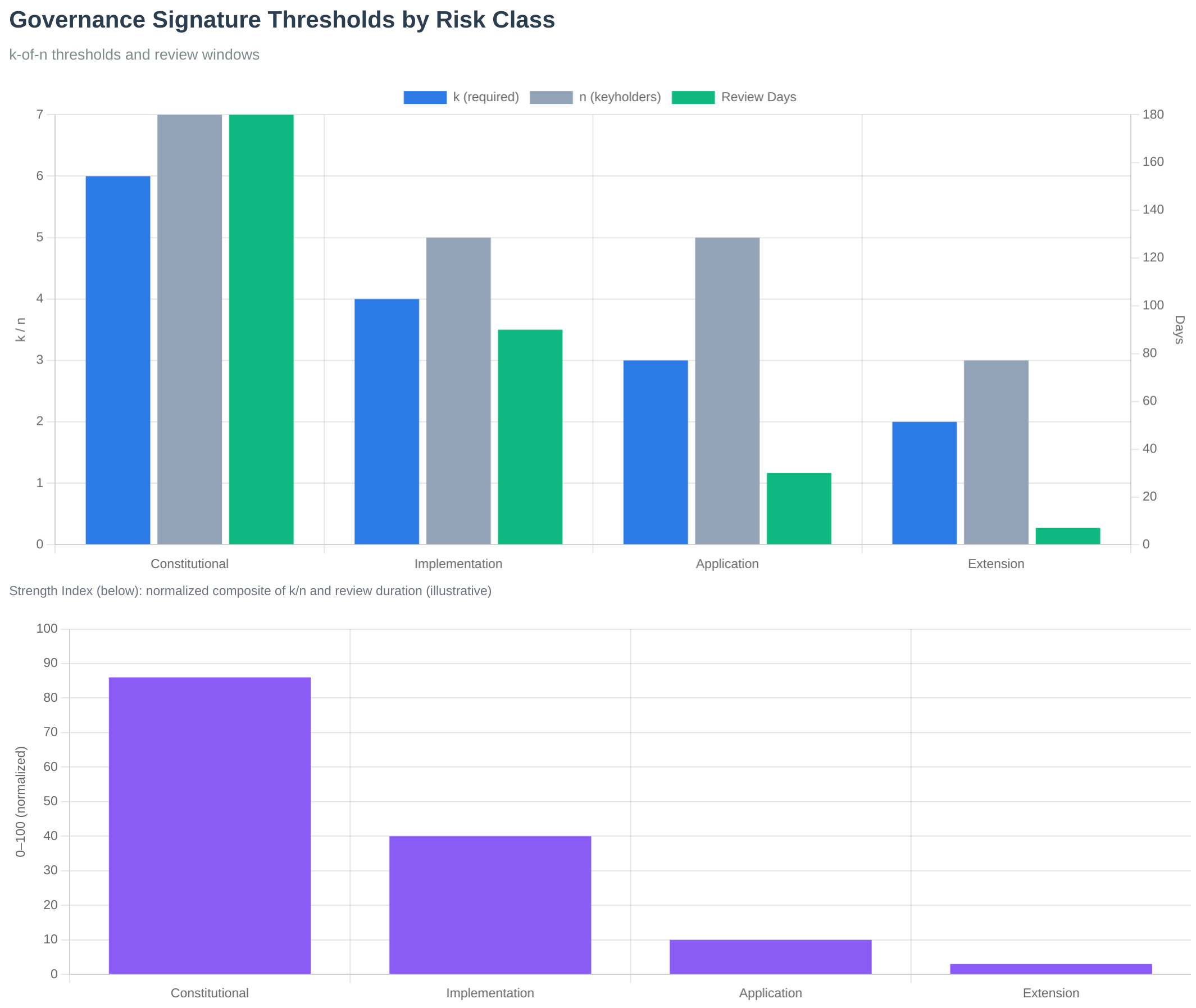

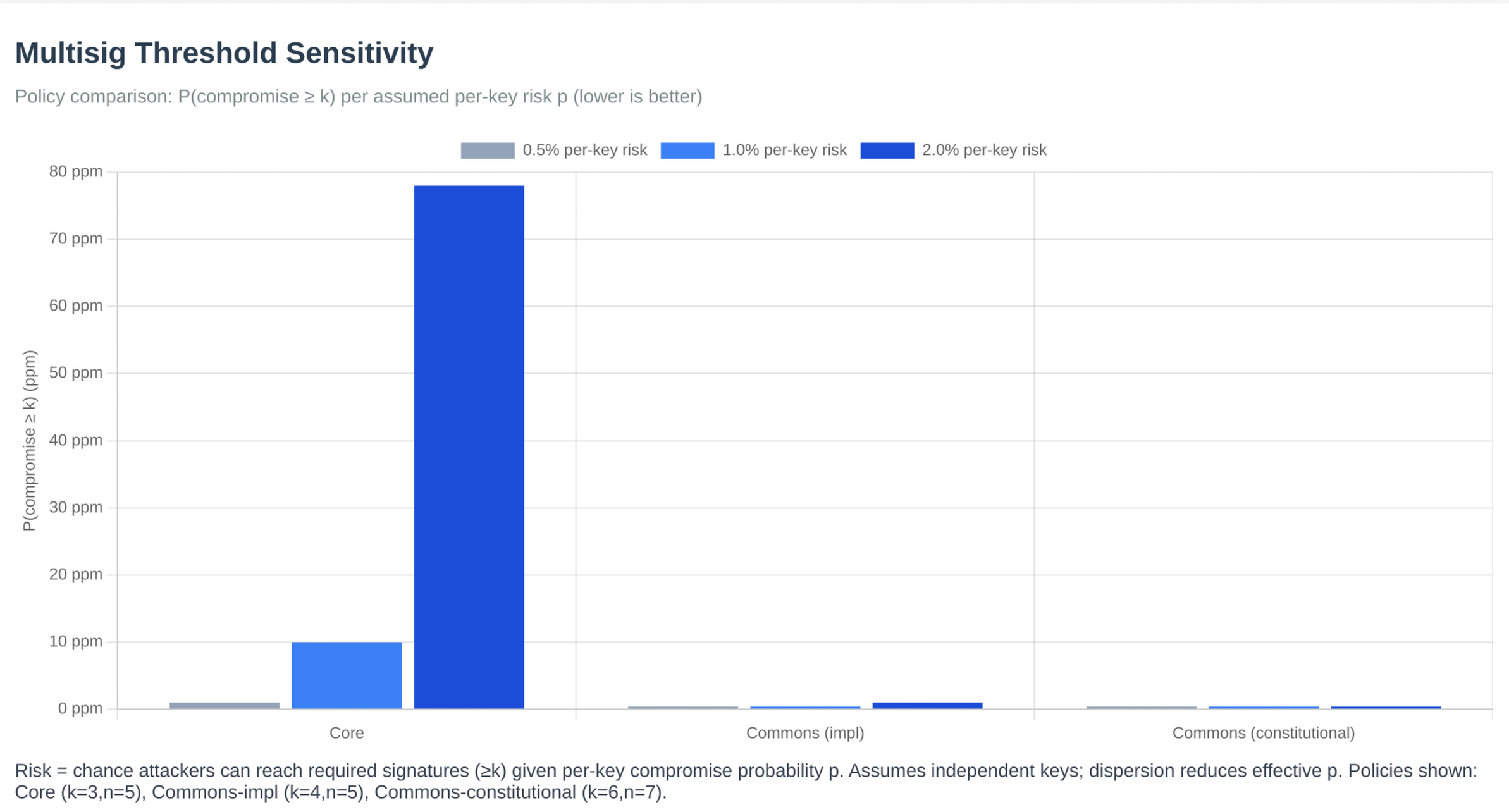

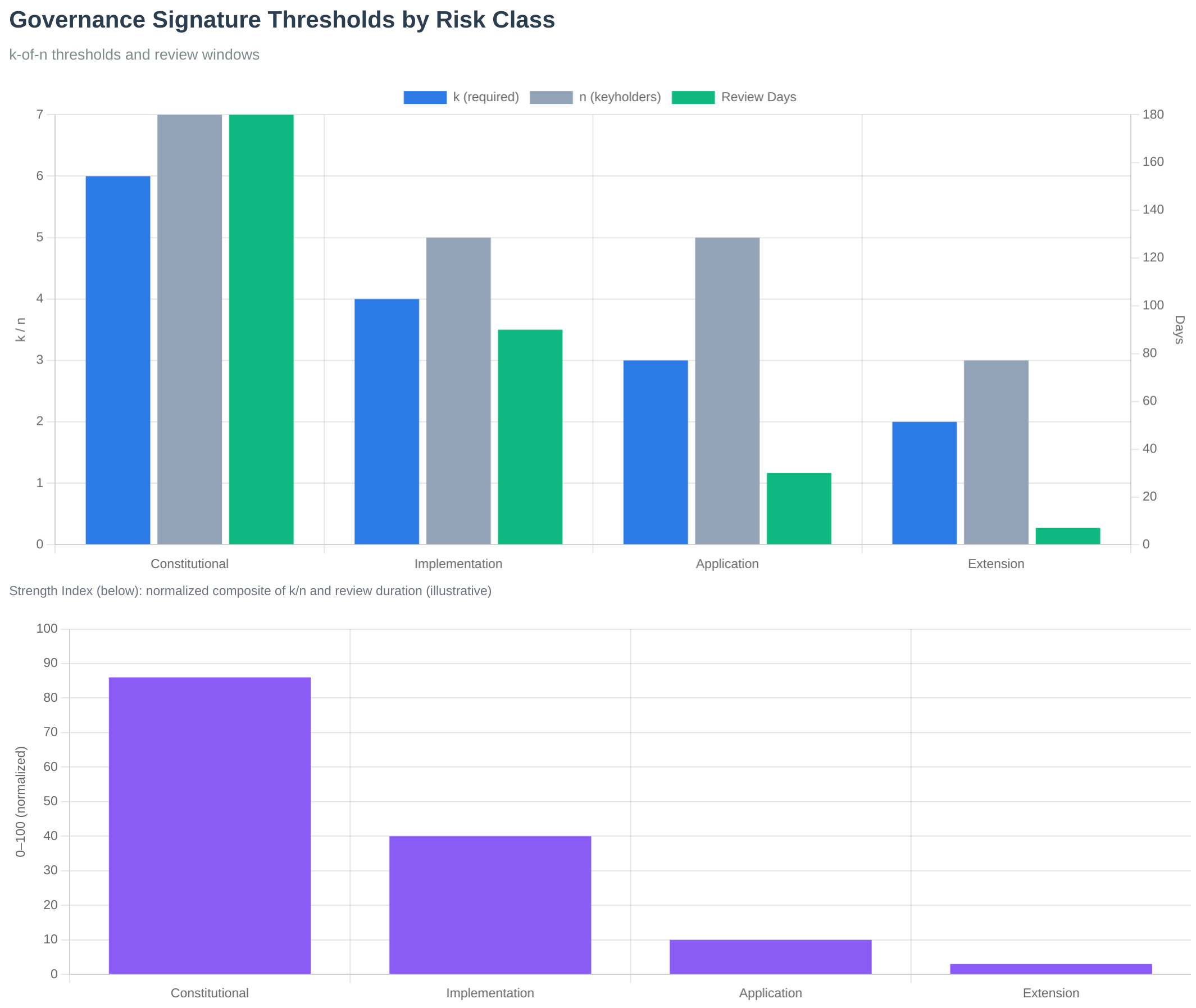

- Governance: Layer 1 (Constitutional - 6-of-7 maintainers, 180 days, see Layer-Tier Model)

Tier 2: Consensus Layer (blvm-consensus)

- Purpose: Pure mathematical implementation of Orange Paper functions

- Type: Rust library (pure functions, no side effects)

- Dependencies: blvm-primitives (shared types, serialization, consensus crypto); pinned transitive crates as in Cargo.toml

- Governance: Layer 2 (Constitutional - 6-of-7 maintainers, 180 days, see Layer-Tier Model)

- Key Functions: CheckTransaction, ConnectBlock, EvalScript, VerifyScript

Tier 3: Protocol Layer (blvm-protocol)

- Purpose: Protocol abstraction layer for multiple Bitcoin variants

- Type: Rust library

- Dependencies: blvm-consensus and blvm-primitives (exact / ranged versions per Cargo.toml)

- Governance: Layer 3 (Implementation - 4-of-5 maintainers, 90 days, see Layer-Tier Model)

- Supports: mainnet, testnet, regtest, and additional protocol variants

Tier 4: Node Implementation (blvm-node)

- Purpose: Minimal reference full node—non-consensus infrastructure only; deploy with security hardening and check System Status for governance and maturity

- Type: Rust binaries (full node)

- Dependencies: blvm-protocol, blvm-consensus (exact versions)

- Governance: Layer 4 (Application - 3-of-5 maintainers, 60 days, see Layer-Tier Model)

- Components: Block validation, storage, P2P networking, RPC, mining

Tier 5: Developer SDK (blvm-sdk)

- Purpose: Developer toolkit and governance cryptographic primitives

- Type: Rust library and CLI tools

- Dependencies: Declares blvm-protocol and blvm-consensus on crates.io (for composition and module tooling); optional blvm-node via the default node feature. See the crate Cargo.toml.

- Governance: Layer 5 (Extension - 2-of-3 maintainers, 14 days, see Layer-Tier Model)

- Components: Key generation, signing, verification, multisig operations

Tier 6: Governance Infrastructure (blvm-commons)

- Purpose: Cryptographic governance enforcement

- Type: Rust service (GitHub App / server binaries)

- Dependencies: blvm-sdk, blvm-protocol (see blvm-commons/Cargo.toml)

- Governance: Layer 5 (Extension - 2-of-3 maintainers, 14 days)

- Components: GitHub integration, signature verification, status checks
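The Dependencies entries in the tier descriptions above can be written as an edge list and checked for cycles with the standard `tsort` utility. This is a sketch: the edges are transcribed from this page rather than read from any Cargo.toml, the optional blvm-sdk to blvm-node edge is left out, and the function name `blvm_edges` is hypothetical.

```bash
# Each line "A B" means crate A depends on crate B.
blvm_edges() {
  cat <<'EOF'
blvm-consensus blvm-primitives
blvm-protocol blvm-consensus
blvm-protocol blvm-primitives
blvm-node blvm-protocol
blvm-node blvm-consensus
blvm-sdk blvm-protocol
blvm-sdk blvm-consensus
blvm-commons blvm-sdk
blvm-commons blvm-protocol
EOF
}

# tsort prints a topological order (dependents before their dependencies)
# and reports a loop if the graph contains a cycle.
blvm_edges | tsort
```

Every crate listed reaches blvm-primitives through some chain of dependencies, so it appears last in the output.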

Data flow

The dependency graph above is the accurate picture for crate dependencies. At runtime, blocks and transactions flow through the node, which calls into protocol and consensus libraries to validate; the Orange Paper remains the specification those libraries implement.

Figure: Operational view (IBD, validation, modules, governance). For which crate depends on which, use the dependency graph in this page.


- Orange Paper specifies consensus rules.

- blvm-consensus implements those rules (pure functions).

- blvm-protocol layers network parameters, wire helpers, and protocol policy on top of consensus types.

- blvm-node runs networking, storage, RPC, and orchestration; validation calls into protocol + consensus.

- blvm-sdk supplies governance crypto, composition, and (optional) node integration for modules.

- blvm-commons runs governance enforcement services using blvm-sdk and blvm-protocol types.

Cross-Layer Validation

- Dependencies between layers are strictly enforced in the crate graph (no application layer should reimplement consensus).

- Consensus rule modifications are prevented in application layers by design (validation calls into blvm-consensus).

- The Orange Paper is the specification; blvm-consensus is checked with formal verification, tests, and review—not a single “one-shot” equivalence proof of the whole spec.

- Version coordination (Cargo / release sets) keeps compatible crate versions together.

See Also

- System Overview - High-level architecture overview

- Design Philosophy - Core design principles

- Consensus Architecture - Consensus layer details

- Protocol Architecture - Protocol layer details

- Node Overview - Node implementation details

Design Philosophy

BLVM is built on core principles that guide all design decisions.

Core Principles

1. Mathematical Correctness First

- Direct implementation of Orange Paper specifications

- No interpretation or approximation

- BLVM Specification Lock, tests, and review validate consensus-critical code against the Orange Paper

- Pure functions with no side effects (where the design allows)

2. Layered Architecture

- Clear separation of concerns

- Each layer builds on previous layers

- No circular dependencies

- Independent versioning where possible

3. Zero Consensus Re-implementation

- All consensus logic comes from blvm-consensus

- Application layers cannot modify consensus rules

- Protocol abstraction enables variants without consensus changes

- Clear security boundaries

4. Cryptographic Governance

- Apply Bitcoin’s cryptographic primitives to governance

- Make power visible, capture expensive, exit cheap

- Multi-signature requirements for all changes

- Transparent audit trails

5. User Sovereignty

- Users control what software they run

- No forced network upgrades

- Forkable governance model

Design Decisions

Why Pure Functions?

Pure functions are:

- Testable: Same input always produces same output

- Verifiable: Mathematical properties can be proven

- Composable: Can be combined without side effects

- Predictable: No hidden state or dependencies
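As a small illustration of these properties, the block subsidy schedule can be written as a pure function of block height. This is a sketch for this page, not code taken from blvm-consensus: the function and constant names are hypothetical, while the values (50 BTC initial subsidy, halving every 210,000 blocks) are Bitcoin's well-known mainnet parameters.

```rust
/// Satoshis per bitcoin.
const COIN: u64 = 100_000_000;
/// Blocks between subsidy halvings on mainnet.
const HALVING_INTERVAL: u64 = 210_000;

/// Hypothetical pure function: the result depends only on `height`.
/// There is no I/O and no global state, so the same input always
/// produces the same output.
pub fn block_subsidy(height: u64) -> u64 {
    let halvings = height / HALVING_INTERVAL;
    // After 64 halvings the shift would exceed the width of u64,
    // so the subsidy is defined as zero from that point on.
    if halvings >= 64 {
        return 0;
    }
    (50 * COIN) >> halvings
}
```

Because the function has no hidden inputs, a test only needs to state heights and expected values; no node, storage, or network setup is involved.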

Why Layered Architecture?

Layered architecture provides:

- Separation of Concerns: Each layer has a single responsibility

- Reusability: Lower layers can be used independently

- Testability: Each layer can be tested in isolation

- Evolution: Protocol can evolve without consensus changes

Why Formal Verification?

BLVM Specification Lock adds Z3-checked proofs on spec-locked consensus code, alongside tests and review:

- Correctness: Machine-checked linkage to Orange Paper contracts (PROOF_LIMITATIONS.md)

- Defense in depth: Fuzzing and integration tests exercise behavior; spec-lock discharges proofs on spec-locked functions

- Auditability: CI and optional OpenTimestamps on verification artifacts

Why Cryptographic Governance?

Cryptographic governance provides:

- Transparency: All decisions are cryptographically verifiable

- Accountability: Clear audit trail of all actions

- Resistance to Capture: Multi-signature requirements make capture expensive

- User Protection: Forkable governance allows users to exit if they disagree

Trade-offs

Performance vs Correctness

- Choice: Correctness first

- Rationale: Consensus violations are catastrophic

- Mitigation: Optimize after verification

Flexibility vs Safety

- Choice: Safety first

- Rationale: Bitcoin consensus must be stable

- Mitigation: Protocol abstraction enables experimentation

Simplicity vs Features

- Choice: Simplicity where possible

- Rationale: Complex code is harder to verify

- Mitigation: Add features only when necessary

Design Evolution

BLVM is designed to support Bitcoin’s evolution for the next 500 years:

- Protocol Evolution: New variants without consensus changes

- Feature Addition: New capabilities through protocol abstraction

- Governance Evolution: Governance rules can evolve through proper process

- User Choice: Multiple implementations can coexist

See Also

- System Overview - High-level architecture overview

- Component Relationships - Layer dependencies and data flow

- Consensus Architecture - Mathematical correctness implementation

- Governance Overview - Cryptographic governance system

- Orange Paper - Mathematical foundation

Module System

Overview

The module system supports optional features (Lightning Network, merge mining, privacy enhancements) without affecting consensus or base node stability. Modules run in separate processes with IPC communication, providing security through isolation.

Available Modules

The following modules are available for blvm-node:

- Lightning Network Module - Lightning Network payment processing, invoice verification, payment routing, and channel management

- Commons Mesh Module - Payment-gated mesh networking with routing fees, traffic classification, and anti-monopoly protection

- Stratum V2 Module - Stratum V2 mining protocol support and mining pool management

- Datum Module - DATUM Gateway mining protocol

- Mining OS Module - MiningOS integration

- Merge Mining Module - Merge mining available as separate paid plugin (blvm-merge-mining)

For detailed documentation on each module, see the Modules section.

Writing modules: Use the SDK declarative style (blvm-sdk attribute macros and run_module!) to define CLI, RPC, and event handling in one impl block without manual IPC loops; see Module Development. Alternatively use the integration API or low-level IPC for custom control.

Architecture

Process Isolation

Each module runs in a separate process with isolated memory. The base node consensus state is protected and read-only to modules.

Figure: Process isolation. Inside the blvm-node process, the consensus state (protected, read-only) is exposed read-only to the Module Manager (orchestration), which is also fed by the Network Manager, Storage Manager, and RPC Manager. Each module runs in its own process (blvm-lightning, blvm-mesh, blvm-stratum-v2) with isolated state memory and a sandbox enforcing resource limits; the Module Manager reaches each module over IPC Unix sockets.

Code: mod.rs

Core Components

ModuleManager

Orchestrates all modules, handling lifecycle, runtime loading/unloading/reloading, and coordination.

Features:

- Module discovery and loading

- Process spawning and monitoring

- IPC server management

- Event subscription management

- Dependency resolution

- Registry integration

Code: manager.rs

Process Isolation

Modules run in separate processes via ModuleProcessSpawner:

- Separate memory space

- Isolated execution environment

- Resource limits enforced

- Crash containment

Code: spawner.rs

IPC Communication

Modules communicate with the base node via Unix domain sockets (Unix) or named pipes (Windows):

- Request/response protocol

- Event subscription system (SubscribeEvents / EventType: node → module notifications)

- Correlation IDs for async operations

- Type-safe message serialization

- Targeted node control (module → node): NodeAPI / IPC also exposes bounded writes that are not consensus changes, e.g. P2P serve denylists (block/tx getdata policy), get_sync_status, ban_peer, and block-serve maintenance mode. Details: Module IPC Protocol, Module Development.

Code: protocol.rs

Security Sandbox

Modules run in sandboxed environments with:

- Resource limits (CPU, memory, file descriptors)

- Filesystem restrictions

- Network restrictions

- Permission-based API access

Code: network.rs

Permission System

Modules request capabilities that are validated before API access. Capabilities use snake_case in module.toml (e.g., read_blockchain) and map to Permission enum variants (e.g., ReadBlockchain).

Core Permissions:

- read_blockchain / ReadBlockchain - Read-only blockchain access (blocks, headers, transactions)

- read_utxo / ReadUTXO - Query UTXO set (read-only)

- read_chain_state / ReadChainState - Query chain state (height, tip)

- subscribe_events / SubscribeEvents - Subscribe to node events

- send_transactions / SendTransactions - Submit transactions to mempool (future: may be restricted)

Mempool & Network Permissions:

- read_mempool / ReadMempool - Read mempool data (transactions, size, fee estimates)

- read_network / ReadNetwork - Read network data (peers, stats)

- network_access / NetworkAccess - Send network packets (mesh packets, etc.)

Lightning & Payment Permissions:

- read_lightning / ReadLightning - Read Lightning network data

- read_payment / ReadPayment - Read payment data

Storage Permissions:

- read_storage / ReadStorage - Read from module storage

- write_storage / WriteStorage - Write to module storage

- manage_storage / ManageStorage - Manage storage (create/delete trees, manage quotas)

Filesystem Permissions:

- read_filesystem / ReadFilesystem - Read files from module data directory

- write_filesystem / WriteFilesystem - Write files to module data directory

- manage_filesystem / ManageFilesystem - Manage filesystem (create/delete directories, manage quotas)

RPC & Timers Permissions:

- register_rpc_endpoint / RegisterRpcEndpoint - Register RPC endpoints

- manage_timers / ManageTimers - Manage timers and scheduled tasks

Metrics Permissions:

- report_metrics / ReportMetrics - Report metrics

- read_metrics / ReadMetrics - Read metrics

Module Communication Permissions:

- discover_modules / DiscoverModules - Discover other modules

- publish_events / PublishEvents - Publish events to other modules

- call_module / CallModule - Call other modules’ APIs

- register_module_api / RegisterModuleApi - Register module API for other modules to call

Code: permissions.rs
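The mapping from snake_case capability strings to Permission variants, and the check performed before an API call, can be sketched as follows. This is an illustration only: the enum shows just the five core permissions, and parse_capability and is_allowed are hypothetical helper names, not the functions in permissions.rs.

```rust
/// Illustrative subset of the permissions listed above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Permission {
    ReadBlockchain,
    ReadUTXO,
    ReadChainState,
    SubscribeEvents,
    SendTransactions,
}

/// Hypothetical helper: map a snake_case capability string from
/// module.toml to a `Permission` variant. Unknown strings yield `None`
/// so that a typo in the manifest is rejected rather than ignored.
pub fn parse_capability(name: &str) -> Option<Permission> {
    match name {
        "read_blockchain" => Some(Permission::ReadBlockchain),
        "read_utxo" => Some(Permission::ReadUTXO),
        "read_chain_state" => Some(Permission::ReadChainState),
        "subscribe_events" => Some(Permission::SubscribeEvents),
        "send_transactions" => Some(Permission::SendTransactions),
        _ => None,
    }
}

/// Hypothetical check: an API call is allowed only if the module was
/// granted the permission that call requires.
pub fn is_allowed(granted: &[Permission], required: Permission) -> bool {
    granted.contains(&required)
}
```

With this shape, a module whose manifest lists only read_blockchain and subscribe_events would be refused when it tries to submit a transaction, since SendTransactions is not in its granted set.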

Module Lifecycle

Discovery → Verification → Loading → Execution → Monitoring
    │            │            │          │           │
    │            │            │          │           │
    ▼            ▼            ▼          ▼           ▼
Registry       Signer      Loader     Process     Monitor

Discovery

Modules are discovered through:

- Local filesystem (modules/ directory)

- Module registry (REST API)

- Manual installation

Code: discovery.rs

Verification

Each module is verified through:

- Hash verification (binary integrity)

- Signature verification (multisig maintainer signatures)

- Permission checking (capability validation)

- Compatibility checking (version requirements)

Code: manifest_validator.rs

Loading

The module is loaded into an isolated process:

- Sandbox creation (resource limits)

- IPC connection establishment

- API subscription setup

Code: manager.rs

Execution

The module runs in an isolated process:

- Separate memory space

- Resource limits enforced

- IPC communication only

- Event subscription active

Monitoring

Module health is monitored:

- Process status tracking

- Resource usage monitoring

- Error tracking

- Crash isolation

Code: monitor.rs

Security Model

Consensus Isolation

Modules cannot:

- Modify consensus rules

- Modify UTXO set

- Access node private keys

- Bypass security boundaries

- Affect other modules

Guarantee: Module failures are isolated and cannot affect consensus.

Crash Containment

Module crashes are isolated and do not affect the base node. The ModuleProcessMonitor detects crashes and automatically removes failed modules.

Code: manager.rs

Security Flow

Module Binary

│

├─→ Hash Verification ──→ Integrity Check

│

├─→ Signature Verification ──→ Multisig Check ──→ Maintainer Verification

│

├─→ Permission Check ──→ Capability Validation

│

└─→ Sandbox Creation ──→ Resource Limits ──→ Isolation

Module Manifest

Module manifests use TOML format:

# Module Identity

name = "lightning-network"

version = "1.2.3"

description = "Lightning Network implementation"

author = "Alice <alice@example.com>"

# Governance

[governance]

tier = "application"

maintainers = ["alice", "bob", "charlie"]

threshold = "2-of-3"

review_period_days = 14

# Signatures

[signatures]

maintainers = [

{ name = "alice", key = "02abc...", signature = "..." },

{ name = "bob", key = "03def...", signature = "..." }

]

threshold = "2-of-3"

# Binary

[binary]

hash = "sha256:abc123..."

size = 1234567

download_url = "https://registry.bitcoincommons.org/modules/lightning-network/1.2.3"

# Dependencies

[dependencies]

"blvm-node" = ">=1.0.0"

"another-module" = ">=0.5.0"

# Compatibility

[compatibility]

min_consensus_version = "1.0.0"

min_protocol_version = "1.0.0"

min_node_version = "1.0.0"

tested_with = ["1.0.0", "1.1.0"]

# Capabilities

capabilities = [

"read_blockchain",

"subscribe_events"

]

Code: manifest.rs
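The threshold field in the manifest ("2-of-3") implies a simple rule: at least the required number of distinct maintainers must have valid signatures. A minimal sketch of that rule is given here, assuming the signatures have already been cryptographically verified elsewhere; parse_threshold and threshold_met are hypothetical names, not the API of manifest.rs.

```rust
/// Parse a threshold string such as "2-of-3" into (required, total).
/// Hypothetical helper; returns `None` for malformed or impossible values.
pub fn parse_threshold(s: &str) -> Option<(usize, usize)> {
    let (required, total) = s.split_once("-of-")?;
    let required: usize = required.parse().ok()?;
    let total: usize = total.parse().ok()?;
    if required == 0 || required > total {
        return None;
    }
    Some((required, total))
}

/// Returns true when the number of distinct maintainers with an
/// already-verified signature meets the threshold. Signature
/// verification itself is out of scope for this sketch.
pub fn threshold_met(threshold: &str, verified_signers: &[&str]) -> bool {
    match parse_threshold(threshold) {
        Some((required, _total)) => {
            // Count each maintainer once, even if they signed twice.
            let mut unique: Vec<&str> = verified_signers.to_vec();
            unique.sort_unstable();
            unique.dedup();
            unique.len() >= required
        }
        None => false,
    }
}
```

Deduplicating signer names matters here: two signatures from the same maintainer should not satisfy a 2-of-3 requirement.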

API Hub

The ModuleApiHub routes API requests from modules to the appropriate handlers:

- Blockchain API (blocks, headers, transactions)

- Governance API (proposals, votes)

- Communication API (P2P messaging)

Code: hub.rs

Event System

The module event system notifies modules about node state changes, blockchain events, and system lifecycle events. Delivery is best-effort and ordered per module; see Event Delivery Guarantees.

Event Subscription

Modules subscribe to events they need during initialization:

let event_types = vec![
    EventType::NewBlock,
    EventType::NewTransaction,
    EventType::ModuleLoaded,
    EventType::ConfigLoaded,
];

client.subscribe_events(event_types).await?;

Event Categories

Core Blockchain Events:

- NewBlock - Block connected to chain

- NewTransaction - Transaction in mempool

- BlockDisconnected - Block disconnected (reorg)

- ChainReorg - Chain reorganization

Payment Events:

- PaymentRequestCreated - Payment request created

- PaymentSettled - Payment settled (confirmed on-chain)

- PaymentFailed - Payment failed

- PaymentVerified - Lightning payment verified

- PaymentRouteFound - Payment route discovered

- PaymentRouteFailed - Payment routing failed

- ChannelOpened - Lightning channel opened

- ChannelClosed - Lightning channel closed

Mining Events:

- BlockMined - Block mined successfully

- BlockTemplateUpdated - Block template updated

- MiningDifficultyChanged - Mining difficulty changed

- MiningJobCreated - Mining job created

- ShareSubmitted - Mining share submitted

- MergeMiningReward - Merge mining reward received

- MiningPoolConnected - Mining pool connected

- MiningPoolDisconnected - Mining pool disconnected

Mesh Networking Events:

- MeshPacketReceived - Mesh packet received from network

Stratum V2 Events:

- StratumV2MessageReceived - Stratum V2 message received from network

Module Lifecycle Events:

- ModuleLoaded - Module loaded (published after subscription)

- ModuleUnloaded - Module unloaded

- ModuleCrashed - Module crashed

- ModuleDiscovered - Module discovered

- ModuleInstalled - Module installed

- ModuleUpdated - Module updated

- ModuleRemoved - Module removed

Configuration Events:

- ConfigLoaded - Node configuration loaded/changed

Node Lifecycle Events:

- NodeStartupCompleted - Node fully operational

- NodeShutdown - Node shutting down

- NodeShutdownCompleted - Shutdown complete

Maintenance Events:

- DataMaintenance - Unified cleanup/flush event (replaces StorageFlush + DataCleanup)

- MaintenanceStarted - Maintenance started

- MaintenanceCompleted - Maintenance completed

- HealthCheck - Health check performed

Resource Management Events:

- DiskSpaceLow - Disk space low

- ResourceLimitWarning - Resource limit warning

Governance Events:

- GovernanceProposalCreated - Proposal created

- GovernanceProposalVoted - Vote cast

- GovernanceProposalMerged - Proposal merged

- GovernanceForkDetected - Governance fork detected

- WebhookSent - Webhook sent

- WebhookFailed - Webhook delivery failed

Network Events:

- PeerConnected - Peer connected

- PeerDisconnected - Peer disconnected

- PeerBanned - Peer banned

- PeerUnbanned - Peer unbanned

- MessageReceived - Network message received

- MessageSent - Network message sent

- BroadcastStarted - Broadcast started

- BroadcastCompleted - Broadcast completed

- RouteDiscovered - Route discovered

- RouteFailed - Route failed

- ConnectionAttempt - Connection attempt (success/failure)

- AddressDiscovered - New peer address discovered

- AddressExpired - Peer address expired

- NetworkPartition - Network partition detected

- NetworkReconnected - Network partition reconnected

- DoSAttackDetected - DoS attack detected

- RateLimitExceeded - Rate limit exceeded

Consensus Events:

- BlockValidationStarted - Block validation started

- BlockValidationCompleted - Block validation completed (success/failure)

- ScriptVerificationStarted - Script verification started

- ScriptVerificationCompleted - Script verification completed

- UTXOValidationStarted - UTXO validation started

- UTXOValidationCompleted - UTXO validation completed

- DifficultyAdjusted - Network difficulty adjusted

- SoftForkActivated - Soft fork activated (SegWit, Taproot, CTV, etc.)

- SoftForkLockedIn - Soft fork locked in (BIP9)

- ConsensusRuleViolation - Consensus rule violation detected

Sync Events:

- HeadersSyncStarted - Headers sync started

- HeadersSyncProgress - Headers sync progress update

- HeadersSyncCompleted - Headers sync completed

- BlockSyncStarted - Block sync started (IBD)

- BlockSyncProgress - Block sync progress update

- BlockSyncCompleted - Block sync completed

Mempool Events:

- MempoolTransactionAdded - Transaction added to mempool

- MempoolTransactionRemoved - Transaction removed from mempool

- FeeRateChanged - Fee rate changed

Additional Event Categories:

- Dandelion++ Events (DandelionStemStarted, DandelionStemAdvanced, DandelionFluffed, etc.)

- Compact Blocks Events (CompactBlockReceived, BlockReconstructionStarted, etc.)

- FIBRE Events (FibreBlockEncoded, FibreBlockSent, FibrePeerRegistered)

- Package Relay Events (PackageReceived, PackageRejected)

- UTXO Commitments Events (UtxoCommitmentReceived, UtxoCommitmentVerified)

- Ban List Sharing Events (BanListShared, BanListReceived)

For a complete list of all event types, see EventType enum.

Event Delivery Guarantees

At-Most-Once Delivery:

- Events are delivered at most once per subscriber

- If channel is full, event is dropped (not retried)

- If channel is closed, module is removed from subscriptions

Best-Effort Delivery:

- Events are delivered on a best-effort basis

- No guaranteed delivery (modules can be slow/dead)

- Statistics track delivery success/failure rates

Ordering Guarantees:

- Events are delivered in order per module (single channel)

- No cross-module ordering guarantees

- ModuleLoaded events are ordered: subscription → ModuleLoaded

Event Timing and Consistency

ModuleLoaded Event Timing:

- ModuleLoaded events are only published AFTER a module has subscribed (after startup is complete)

- This ensures modules are fully ready before receiving ModuleLoaded events

- Hotloaded modules automatically receive all already-loaded modules when subscribing

Event Flow:

- Module process is spawned

- Module connects via IPC and sends Handshake

- Module sends SubscribeEvents request

- At subscription time:

  - Module receives ModuleLoaded events for all already-loaded modules (hotloaded modules get existing modules)

  - ModuleLoaded is published for the newly subscribing module (if it’s loaded)

- Module is now fully operational

Event Delivery Reliability

Channel Buffering:

- 100-event buffer per module (prevents unbounded memory growth)

- Non-blocking delivery (publisher never blocks)

- Channel full events are tracked in statistics

Error Handling:

- Channel Full: Event dropped with warning, module subscription NOT removed (module is slow, not dead)

- Channel Closed: Module subscription removed, statistics track failed delivery

- Serialization Errors: Event dropped with warning, module subscription NOT removed

Delivery Statistics:

- Track success/failure/channel-full counts per module

- Available via EventManager::get_delivery_stats()

- Useful for monitoring and debugging

Code: events.rs
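The channel-full and channel-closed cases described above can be sketched with a bounded channel and a non-blocking send. This is a simplified model, not the code in events.rs: it uses the standard library's sync_channel as a stand-in for whatever channel type the node actually uses, and DeliveryStats, Delivery, deliver, and subscriber_channel are hypothetical names.

```rust
use std::sync::mpsc::{sync_channel, Receiver, SyncSender, TrySendError};

/// Per-module delivery counters, mirroring the statistics described above.
#[derive(Debug, Default, PartialEq, Eq)]
pub struct DeliveryStats {
    pub delivered: u64,
    pub failed: u64,
    pub channel_full: u64,
}

/// Outcome of a single delivery attempt.
#[derive(Debug, PartialEq, Eq)]
pub enum Delivery {
    Delivered,
    /// Buffer full: the event is dropped but the subscription is kept.
    DroppedFull,
    /// Receiver gone: the caller should remove the subscription.
    Unsubscribe,
}

/// Create a bounded channel for one subscriber (100 events in the text above).
pub fn subscriber_channel<E>(capacity: usize) -> (SyncSender<E>, Receiver<E>) {
    sync_channel(capacity)
}

/// Try to deliver one event without ever blocking the publisher.
pub fn deliver<E>(tx: &SyncSender<E>, event: E, stats: &mut DeliveryStats) -> Delivery {
    match tx.try_send(event) {
        Ok(()) => {
            stats.delivered += 1;
            Delivery::Delivered
        }
        // Slow consumer: drop this event, keep the module subscribed.
        Err(TrySendError::Full(_)) => {
            stats.channel_full += 1;
            Delivery::DroppedFull
        }
        // Dead consumer: record the failure and signal removal.
        Err(TrySendError::Disconnected(_)) => {
            stats.failed += 1;
            Delivery::Unsubscribe
        }
    }
}
```

Distinguishing the two error cases is the point of the design: a full buffer says the module is slow, while a closed channel says it is gone, and only the second justifies removing the subscription.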

For detailed event system documentation, see:

- Event System Integration - Complete integration guide

- Event Consistency - Event timing and consistency guarantees

- Janitorial Events - Maintenance and lifecycle events

Module Registry

Modules can be discovered and installed from a module registry:

- REST API client for module discovery

- Binary download and verification

- Dependency resolution

- Signature verification

Code: client.rs

Usage

Loading a Module

use blvm_node::module::{ModuleManager, ModuleMetadata};

// Create the manager with the module, data, and socket directories
let mut manager = ModuleManager::new(
    modules_dir,
    data_dir,
    socket_dir,
);

// Start the manager with the IPC socket path and the NodeAPI handle
manager.start(socket_path, node_api).await?;

// Load a single module by name
manager.load_module(
    "lightning-network",
    binary_path,
    metadata,
    config,
).await?;

Auto-Discovery

// Automatically discover and load all modules
manager.auto_load_modules().await?;

Code: manager.rs

Benefits

- Consensus Isolation: Modules cannot affect consensus rules

- Crash Containment: Module failures don’t affect base node

- Security: Process isolation and permission system

- Extensibility: Add features without consensus changes

- Flexibility: Load/unload modules at runtime

- Governance: Modules subject to governance approval

Use Cases

- Lightning Network: Payment channel management

- Merge Mining: Auxiliary chain support

- Privacy Enhancements: Transaction mixing, coinjoin

- Alternative Mempool Policies: Custom transaction selection

- Smart Contracts: Layer 2 contract execution

Components

The module system includes:

- Process isolation

- IPC communication

- Security sandboxing

- Permission system

- Module registry

- Event system

- API hub

Location: blvm-node/src/module/

IPC Communication

Modules communicate with the node via the Module IPC Protocol:

- Protocol: Length-delimited binary messages over Unix domain sockets

- Message Types: Requests, Responses, Events, Logs

- Security: Process isolation, permission-based API access, resource sandboxing

- Performance: Persistent connections, concurrent requests, correlation IDs

Integration Approaches

There are two approaches for modules to integrate with the node:

1. ModuleIntegration (Recommended for New Modules)

The ModuleIntegration API provides a simplified, unified interface for module integration:

use blvm_node::module::integration::ModuleIntegration;

// Connect to node (socket_path must be PathBuf)
let socket_path = std::path::PathBuf::from(socket_path);
let mut integration = ModuleIntegration::connect(
    socket_path,
    module_id,
    module_name,
    version,
).await?;

// Subscribe to events
integration.subscribe_events(event_types).await?;

// Get NodeAPI
let node_api = integration.node_api();

// Get event receiver
let mut event_receiver = integration.event_receiver();

Benefits:

- Single unified API for all integration needs

- Automatic handshake and connection management

- Simplified event subscription

- Direct access to NodeAPI and event receiver

Used by: blvm-mesh and its submodules (blvm-onion, blvm-mining-pool, blvm-messaging, blvm-bridge)

2. ModuleClient + NodeApiIpc (Legacy Approach)

The traditional approach uses separate components:

use std::sync::Arc;

use blvm_node::module::ipc::client::ModuleIpcClient;
use blvm_node::module::api::node_api::NodeApiIpc;

// Connect to IPC socket
let mut ipc_client = ModuleIpcClient::connect(&socket_path).await?;

// Perform handshake manually
let handshake_request = RequestMessage { /* ... */ };
let response = ipc_client.request(handshake_request).await?;

// Create NodeAPI wrapper
// NodeApiIpc requires Arc<Mutex<ModuleIpcClient>> and module_id
let ipc_client_arc = Arc::new(tokio::sync::Mutex::new(ipc_client));
let node_api = Arc::new(NodeApiIpc::new(ipc_client_arc, "my-module".to_string()));

// Create ModuleClient for event subscription
let mut client = ModuleClient::connect(/* ... */).await?;
client.subscribe_events(event_types).await?;
let mut event_receiver = client.event_receiver();

Benefits:

- More granular control over IPC communication

- Direct access to IPC client for custom requests

- Established, stable API

Used by: blvm-lightning, blvm-stratum-v2, blvm-datum, blvm-miningos

Migration: New modules should use ModuleIntegration. Existing modules can continue using ModuleClient + NodeApiIpc, but migration to ModuleIntegration is recommended for consistency and simplicity.

For detailed protocol documentation, see Module IPC Protocol.

See Also

- Module IPC Protocol - Complete IPC protocol documentation

- Modules Overview - Overview of all available modules

- Lightning Network Module - Lightning Network payment processing

- Commons Mesh Module - Payment-gated mesh networking

- Stratum V2 Module - Stratum V2 mining protocol

- Datum Module - DATUM Gateway mining protocol

- Mining OS Module - MiningOS integration

- Module Development - Guide for developing custom modules

Module IPC Protocol

Overview

The Module IPC (Inter-Process Communication) protocol enables secure communication between process-isolated modules and the base node. Modules run in separate processes and communicate via Unix domain sockets (named pipes on Windows) using a length-delimited binary message protocol.

Architecture

┌─────────────────────────────────────┐

│ blvm-node Process │

│ ┌───────────────────────────────┐ │

│ │ Module IPC Server │ │

│ │ (Unix Domain Socket) │ │

│ └───────────────────────────────┘ │

└─────────────────────────────────────┘

│ IPC Protocol

│ (Unix Domain Socket)

│

┌─────────────┴─────────────────────┐

│ Module Process (Isolated) │

│ ┌───────────────────────────────┐ │

│ │ Module IPC Client │ │

│ │ (Unix Domain Socket) │ │

│ └───────────────────────────────┘ │

└─────────────────────────────────────┘

Protocol Format

Message Encoding

Messages use length-delimited binary encoding:

[4-byte length][message payload]

- Length: 4-byte little-endian integer (message size)

- Payload: Binary-encoded message (bincode serialization)

Code: mod.rs
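The framing described above can be sketched with the standard library alone. This is an illustration of the wire layout (4-byte little-endian length followed by the payload), not the node's implementation: write_frame, read_frame, and the MAX_FRAME_LEN cap are assumptions made for this example, and the payload is treated as opaque bytes rather than a bincode-encoded message.

```rust
use std::io::{self, Read, Write};

/// Upper bound on a single frame. This cap is an assumption for the
/// sketch; it avoids allocating an arbitrary amount of memory based on
/// a length prefix sent by the other side.
const MAX_FRAME_LEN: u32 = 16 * 1024 * 1024;

/// Write one frame: 4-byte little-endian length, then the payload bytes.
pub fn write_frame<W: Write>(w: &mut W, payload: &[u8]) -> io::Result<()> {
    if payload.len() > MAX_FRAME_LEN as usize {
        return Err(io::Error::new(io::ErrorKind::InvalidInput, "payload too large"));
    }
    let len = payload.len() as u32;
    w.write_all(&len.to_le_bytes())?;
    w.write_all(payload)
}

/// Read one frame previously written by `write_frame`.
pub fn read_frame<R: Read>(r: &mut R) -> io::Result<Vec<u8>> {
    let mut len_buf = [0u8; 4];
    r.read_exact(&mut len_buf)?;
    let len = u32::from_le_bytes(len_buf);
    if len > MAX_FRAME_LEN {
        return Err(io::Error::new(io::ErrorKind::InvalidData, "frame too large"));
    }
    let mut payload = vec![0u8; len as usize];
    r.read_exact(&mut payload)?;
    Ok(payload)
}
```

Both functions are generic over Read and Write, so the same code works against an in-memory buffer in tests and against a socket stream in a real connection.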

Message Types

The protocol supports four message types:

- Request: Module → Node (API calls)

- Response: Node → Module (API responses)

- Event: Node → Module (event notifications)

- Log: Module → Node (logging)

Code: protocol.rs

Message Structure

Request Message

pub struct RequestMessage {
    pub correlation_id: CorrelationId,
    pub request_type: MessageType,
    pub payload: RequestPayload,
}

Request types (representative):

Reads and subscriptions include GetBlock, GetBlockHeader, GetTransaction, GetChainTip, GetBlockHeight, GetUTXO, SubscribeEvents, GetMempoolTransactions, GetNetworkStats, GetNetworkPeers, GetChainInfo, and many others (mining, storage, RPC, timers, …).

P2P serve policy & sync (module → node):

| MessageType | Role |
|---|---|
| MergeBlockServeDenylist | Add block hashes that must not receive full block on getdata (notfound instead). |
| GetBlockServeDenylistSnapshot | Bounded snapshot of the block denylist. |
| ClearBlockServeDenylist / ReplaceBlockServeDenylist | Clear or replace the full set. |
| MergeTxServeDenylist | Same pattern for full tx on getdata. |
| GetTxServeDenylistSnapshot | Bounded snapshot of the tx denylist. |
| ClearTxServeDenylist / ReplaceTxServeDenylist | Clear or replace the tx set. |
| GetSyncStatus | Sync coordinator status (SyncStatus). |
| BanPeer | Ban peer by address; optional duration. |
| SetBlockServeMaintenanceMode | Refuse all full-block getdata answers when enabled. |

These affect relay/serving only, not consensus validation. See NodeAPI for the Rust surface.
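The merge, replace, clear, and maintenance-mode semantics in the table can be modelled as a small in-memory policy object. This is a hypothetical sketch: BlockServePolicy, ServeDecision, and the BlockHash alias are names invented for this example, and how a maintenance-mode refusal is expressed on the wire is not specified here, so it is shown as a separate Refuse outcome.

```rust
use std::collections::HashSet;

/// 32-byte block hash; the alias is an assumption for this sketch.
pub type BlockHash = [u8; 32];

/// What the node does with a `getdata` request for a full block.
#[derive(Debug, PartialEq, Eq)]
pub enum ServeDecision {
    Serve,
    /// Hash is denylisted: answer `notfound` instead of the block.
    NotFound,
    /// Maintenance mode: refuse all full-block answers.
    Refuse,
}

/// Hypothetical in-memory model of the block serve policy.
#[derive(Default)]
pub struct BlockServePolicy {
    denylist: HashSet<BlockHash>,
    maintenance_mode: bool,
}

impl BlockServePolicy {
    /// Merge: add hashes, keeping existing entries. Returns the new size.
    pub fn merge(&mut self, hashes: &[BlockHash]) -> usize {
        self.denylist.extend(hashes.iter().copied());
        self.denylist.len()
    }

    /// Replace the full set with the given hashes.
    pub fn replace(&mut self, hashes: &[BlockHash]) {
        self.denylist = hashes.iter().copied().collect();
    }

    /// Clear the full set.
    pub fn clear(&mut self) {
        self.denylist.clear();
    }

    pub fn set_maintenance_mode(&mut self, enabled: bool) {
        self.maintenance_mode = enabled;
    }

    /// Serving decision only; block validation is untouched by this policy.
    pub fn decide(&self, hash: &BlockHash) -> ServeDecision {
        if self.maintenance_mode {
            ServeDecision::Refuse
        } else if self.denylist.contains(hash) {
            ServeDecision::NotFound
        } else {
            ServeDecision::Serve
        }
    }
}
```

Nothing in this model touches validation state, which matches the statement that these requests change what the node serves to peers, not what it accepts as valid.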

Code: protocol.rs

Response Message

pub struct ResponseMessage {
    pub correlation_id: CorrelationId,
    pub payload: ResponsePayload,
}

Response payload variants carry typed data (blocks, templates, snapshots, booleans, errors, etc.); denylist merges return dedicated merged/snapshot payloads where applicable.

Code: protocol.rs

Event Message

pub struct EventMessage {
    pub event_type: EventType,
    pub payload: EventPayload,
}

Event Types (46+ event types):

- Network events: PeerConnected, MessageReceived, PeerDisconnected

- Payment events: PaymentRequestCreated, PaymentVerified, PaymentSettled

- Chain events: NewBlock, ChainTipUpdated, BlockDisconnected

- Mempool events: MempoolTransactionAdded, FeeRateChanged, MempoolTransactionRemoved

Code: protocol.rs

Log Message

pub struct LogMessage {
    pub level: LogLevel,
    pub message: String,
    pub module_id: String,
}

Log Levels: Error, Warn, Info, Debug, Trace

Code: protocol.rs

Communication Flow

Request-Response Pattern

- Module sends Request: Module sends request message with correlation ID

- Node processes Request: Node processes request and generates response

- Node sends Response: Node sends response with matching correlation ID

- Module receives Response: Module matches response to request using correlation ID

Code: server.rs
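On the module side, matching responses to requests by correlation ID amounts to keeping a table of in-flight requests. A minimal sketch follows, under stated assumptions: CorrelationId is modelled as a u64 counter, each pending entry stores only a description string, and PendingRequests is a hypothetical name rather than a type from the codebase.

```rust
use std::collections::HashMap;

/// Assumption for this sketch: correlation IDs are plain counters.
pub type CorrelationId = u64;

/// Hypothetical client-side table of in-flight requests.
pub struct PendingRequests {
    next_id: CorrelationId,
    in_flight: HashMap<CorrelationId, String>,
}

impl PendingRequests {
    pub fn new() -> Self {
        PendingRequests {
            next_id: 0,
            in_flight: HashMap::new(),
        }
    }

    /// Register an outgoing request and return the fresh ID to send with it.
    pub fn register(&mut self, description: &str) -> CorrelationId {
        let id = self.next_id;
        self.next_id = self.next_id.wrapping_add(1);
        self.in_flight.insert(id, description.to_string());
        id
    }

    /// Match an incoming response to its request. Returns `None` for an
    /// unknown ID or a response that was already consumed.
    pub fn complete(&mut self, id: CorrelationId) -> Option<String> {
        self.in_flight.remove(&id)
    }

    /// Number of requests still waiting for a response.
    pub fn in_flight_count(&self) -> usize {
        self.in_flight.len()
    }
}
```

Because lookup is by ID rather than by arrival order, responses may come back in any order and several requests can be outstanding on one connection at the same time.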

Event Subscription Pattern

- Module subscribes: Module sends SubscribeEvents request with event types

- Node confirms: Node sends subscription confirmation

- Node publishes Events: Node sends event messages as they occur

- Module receives Events: Module processes events asynchronously

Code: server.rs

Connection Management

Handshake

On connection, modules send a handshake message:

pub struct HandshakeMessage {
    pub module_id: String,
    pub capabilities: Vec<String>,
    pub version: String,
}

Code: server.rs

Connection Lifecycle

- Connect: Module connects to Unix domain socket

- Handshake: Module sends handshake, node validates

- Active: Connection active, ready for requests/events

- Disconnect: Connection closed (graceful or error)

Code: server.rs

Security

Process Isolation

- Modules run in separate processes with isolated memory

- No shared memory between node and modules

- Module crashes don’t affect the base node

Code: spawner.rs

Permission System

Modules request capabilities that are validated before API access:

- ReadBlockchain - Read-only blockchain access

- ReadUTXO - Query UTXO set (read-only)

- ReadChainState - Query chain state (height, tip)

- SubscribeEvents - Subscribe to node events

- SendTransactions - Submit transactions to mempool

Code: permissions.rs

Sandboxing

Modules run in sandboxed environments with:

- Resource limits (CPU, memory, file descriptors)

- Filesystem restrictions

- Network restrictions (modules cannot open network connections)

- Permission-based API access

Code: mod.rs

Error Handling

Error Types

pub enum ModuleError {
    ConnectionError(String),
    ProtocolError(String),
    PermissionDenied(String),
    ResourceExhausted(String),
    Timeout(String),
}

Code: traits.rs

Error Recovery

- Connection Errors: Automatic reconnection with exponential backoff

- Protocol Errors: Clear error messages, connection termination

- Permission Errors: Detailed error messages, request rejection

- Timeout Errors: Request timeout, connection remains active

Code: client.rs
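The reconnection schedule for connection errors can be illustrated with a small delay function. This is a sketch of exponential backoff in general, not the policy in client.rs: the base delay, the cap, and the backoff_delay name are assumptions, and no jitter is added.

```rust
use std::time::Duration;

/// Delay before reconnect attempt `attempt` (0-based):
/// `base * 2^attempt`, capped at `max`.
pub fn backoff_delay(attempt: u32, base: Duration, max: Duration) -> Duration {
    // checked_pow and checked_mul guard against overflow for large
    // attempt counts; any overflow simply falls back to the cap.
    let factor = 2u32.checked_pow(attempt).unwrap_or(u32::MAX);
    base.checked_mul(factor).unwrap_or(max).min(max)
}
```

With a 100 ms base and a 5 s cap, successive attempts wait 100 ms, 200 ms, 400 ms, and so on until the cap is reached, after which every attempt waits 5 s.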

Performance

Message Serialization

- Format: bincode (binary encoding)

- Size: Compact binary representation

- Speed: Fast serialization/deserialization

Code: protocol.rs

Connection Pooling

- Persistent Connections: Connections remain open for multiple requests

- Concurrent Requests: Multiple requests can be in-flight simultaneously

- Correlation IDs: Match responses to requests asynchronously

Code: client.rs

Implementation Details

IPC Server

The node-side IPC server:

- Listens on Unix domain socket

- Accepts module connections

- Routes requests to NodeAPI implementation

- Publishes events to subscribed modules

Code: server.rs

IPC Client

The module-side IPC client:

- Connects to Unix domain socket

- Sends requests and receives responses

- Subscribes to events

- Handles connection errors

Code: client.rs

See Also

- Module System - Module system architecture

- Module Development - Building modules

- Modules Overview - Available modules

Event System Integration

Overview

The module event system is designed to handle common integration pain points in distributed module architectures. This document covers all integration scenarios, reliability guarantees, and best practices.

Integration Pain Points Addressed

1. Event Delivery Reliability

Problem: Events can be lost if modules are slow or channels are full.

Solution:

- Channel Buffering: 100-event buffer per module (configurable)

- Non-Blocking Delivery: Uses try_send to avoid blocking the publisher

- Channel Full Handling: Events are dropped with warning (module is slow, not dead)

- Channel Closed Detection: Automatically removes dead modules from subscriptions

- Delivery Statistics: Track success/failure rates per module

Code:

// EventManager tracks delivery statistics
let stats = event_manager.get_delivery_stats("module_id").await;
// Returns: Option<(successful_deliveries, failed_deliveries, channel_full_count)>

2. Event Ordering and Timing

Problem: Events might arrive out of order or modules might miss events during startup.

Solution:

- ModuleLoaded Timing: Only published AFTER module subscribes (startup complete)

- Hotloaded Modules: Automatically receive all already-loaded modules when subscribing

- Consistent Ordering: Subscription → ModuleLoaded events (guaranteed order)

Flow:

- Module loads → Recorded in loaded_modules

- Module subscribes → Receives all already-loaded modules

- ModuleLoaded published → After subscription (startup complete)
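The flow above can be sketched as a small registry that replays earlier modules to a new subscriber and then announces the newcomer. Everything here is hypothetical (`Registry`, `inbox`, string events). The sketch also assumes each module subscribes before the next one loads, and that the announcement goes only to modules that subscribed earlier; the source does not say whether a module receives its own ModuleLoaded.

```rust
use std::collections::BTreeMap;

/// Hypothetical registry of loaded modules and per-module event inboxes.
#[derive(Default)]
pub struct Registry {
    loaded_modules: Vec<String>,
    inboxes: BTreeMap<String, Vec<String>>,
}

impl Registry {
    /// A module loads: it is recorded, but nothing is published yet.
    pub fn record_loaded(&mut self, module_id: &str) {
        if !self.loaded_modules.iter().any(|m| m == module_id) {
            self.loaded_modules.push(module_id.to_string());
        }
    }

    /// A module subscribes: replay first, then announce.
    pub fn subscribe(&mut self, module_id: &str) {
        // Replay: one ModuleLoaded per module that was loaded earlier.
        let replay: Vec<String> = self
            .loaded_modules
            .iter()
            .filter(|m| m.as_str() != module_id)
            .map(|m| format!("ModuleLoaded({})", m))
            .collect();

        // Announce: earlier subscribers learn about the new module,
        // but only if it has actually been recorded as loaded.
        if self.loaded_modules.iter().any(|m| m == module_id) {
            let announcement = format!("ModuleLoaded({})", module_id);
            for inbox in self.inboxes.values_mut() {
                inbox.push(announcement.clone());
            }
        }
        self.inboxes.insert(module_id.to_string(), replay);
    }

    /// Events delivered to a module so far, in delivery order.
    pub fn inbox(&self, module_id: &str) -> &[String] {
        self.inboxes.get(module_id).map(|v| v.as_slice()).unwrap_or(&[])
    }
}
```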

3. Event Channel Backpressure

Problem: Fast publishers can overwhelm slow consumers.

Solution:

- Bounded Channels: 100-event buffer prevents unbounded memory growth

- Non-Blocking: Publisher never blocks, events dropped if channel full

- Statistics Tracking: Monitor channel full events to identify slow modules

- Automatic Cleanup: Dead modules automatically removed

Monitoring:

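// `warn!` is assumed to come from the logging crate in use (for example tracing or log)
let module_id = "module_id";
let stats = event_manager.get_delivery_stats(module_id).await;
if let Some((_, _, channel_full_count)) = stats {
    // More than 100 dropped events suggests the module cannot keep up
    if channel_full_count > 100 {
        warn!("Module {} is slow, dropping events", module_id);
    }
}

4. Missing Events During Startup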

Problem: Modules that start later miss events from earlier modules.

Solution:

- Hotloaded Module Support: Newly subscribing modules receive all already-loaded modules

- Event Replay: ModuleLoaded events sent to newly subscribing modules

- Consistent State: All modules have consistent view of loaded modules

5. Event Type Coverage

Problem: Not all events have corresponding payloads or are published.

Solution:

- Complete Coverage: All EventType variants have corresponding EventPayload variants

- Governance Events: All governance events are published

- Network Events: All network events are published

- Lifecycle Events: All lifecycle events are published

Event Categories

Core Blockchain Events

- NewBlock: Block connected to chain

- NewTransaction: Transaction in mempool

- BlockDisconnected: Block disconnected (reorg)

- ChainReorg: Chain reorganization

Governance Events

- GovernanceProposalCreated: Proposal created

- GovernanceProposalVoted: Vote cast

- GovernanceProposalMerged: Proposal merged

- GovernanceForkDetected: Fork detected

Network Events

- PeerConnected: Peer connected

- PeerDisconnected: Peer disconnected

- PeerBanned: Peer banned

- MessageReceived: Network message received

- BroadcastStarted: Broadcast started

- BroadcastCompleted: Broadcast completed

Module Lifecycle Events

- ModuleLoaded: Module loaded (after subscription)

- ModuleUnloaded: Module unloaded

- ModuleCrashed: Module crashed

- ModuleHealthChanged: Health status changed

Maintenance Events

- DataMaintenance: Unified cleanup/flush (replaces StorageFlush + DataCleanup)

- MaintenanceStarted: Maintenance started

- MaintenanceCompleted: Maintenance completed

- HealthCheck: Health check performed

Resource Management Events

- DiskSpaceLow: Disk space low

- ResourceLimitWarning: Resource limit warning
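One way to keep every event type covered is an exhaustive `match`, which stops compiling as soon as a variant is added without being classified. The enum below only mirrors the names listed in the categories above for illustration; the real EventType may have more variants or a different shape, and `Category` and `category` are hypothetical.

```rust
/// Illustrative mirror of the event names listed above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum EventType {
    NewBlock, NewTransaction, BlockDisconnected, ChainReorg,
    GovernanceProposalCreated, GovernanceProposalVoted,
    GovernanceProposalMerged, GovernanceForkDetected,
    PeerConnected, PeerDisconnected, PeerBanned,
    MessageReceived, BroadcastStarted, BroadcastCompleted,
    ModuleLoaded, ModuleUnloaded, ModuleCrashed, ModuleHealthChanged,
    DataMaintenance, MaintenanceStarted, MaintenanceCompleted, HealthCheck,
    DiskSpaceLow, ResourceLimitWarning,
}

/// Hypothetical grouping that follows the section headings above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Category {
    Blockchain, Governance, Network, ModuleLifecycle, Maintenance, Resource,
}

/// Maps each event type to its category. There is no wildcard arm, so the
/// compiler rejects this function if a new variant is left unclassified.
pub fn category(event: EventType) -> Category {
    use EventType::*;
    match event {
        NewBlock | NewTransaction | BlockDisconnected | ChainReorg => Category::Blockchain,
        GovernanceProposalCreated | GovernanceProposalVoted
        | GovernanceProposalMerged | GovernanceForkDetected => Category::Governance,
        PeerConnected | PeerDisconnected | PeerBanned
        | MessageReceived | BroadcastStarted | BroadcastCompleted => Category::Network,
        ModuleLoaded | ModuleUnloaded | ModuleCrashed | ModuleHealthChanged => {
            Category::ModuleLifecycle
        }
        DataMaintenance | MaintenanceStarted | MaintenanceCompleted | HealthCheck => {
            Category::Maintenance
        }
        DiskSpaceLow | ResourceLimitWarning => Category::Resource,
    }
}
```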

Event Delivery Guarantees

At-Most-Once Delivery

- Events are delivered at most once per subscriber

- If channel is full, event is dropped (not retried)

- If channel is closed, module is removed from subscriptions

Best-Effort Delivery

- Events are delivered on a best-effort basis

- No guaranteed delivery (modules can be slow/dead)

- Statistics track delivery success/failure rates
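Since delivery is best-effort, the statistics are the main signal that a module is losing events. The helper below is a sketch over the documented `(successful, failed, channel_full)` tuple. The `u64` element type, the function names, and the assumption that the failed and channel-full counters do not overlap are not taken from the source.

```rust
/// (successful, failed, channel_full), the tuple shape documented above.
/// The u64 element type is an assumption.
pub type DeliveryStats = (u64, u64, u64);

/// Fraction of delivery attempts that did not reach the module.
/// Assumes failed and channel_full are counted separately.
pub fn loss_ratio(stats: DeliveryStats) -> f64 {
    let (successful, failed, channel_full) = stats;
    let attempts = successful + failed + channel_full;
    if attempts == 0 {
        // No attempts yet, so nothing has been lost.
        return 0.0;
    }
    (failed + channel_full) as f64 / attempts as f64
}

/// Flags a module whose loss ratio exceeds a caller-chosen threshold.
pub fn is_lagging(stats: DeliveryStats, threshold: f64) -> bool {
    loss_ratio(stats) > threshold
}
```

A ratio is often easier to alert on than a raw count, because it stays meaningful however long the node has been running.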

Ordering Guarantees

- Events are delivered in order per module (single channel)

- No cross-module ordering guarantees

- ModuleLoaded events are ordered: subscription → ModuleLoaded

Error Handling

Channel Full

- Event is dropped with warning

- Module subscription is NOT removed (module is slow, not dead)

- Statistics track channel full count

Channel Closed

- Module subscription is removed

- Statistics track failed delivery count

- Module is automatically cleaned up

Serialization Errors

- Event is dropped with warning

- Module subscription is NOT removed

- Error is logged for debugging

Monitoring and Debugging

Delivery Statistics

// Get statistics for a module
let stats = event_manager.get_delivery_stats("module_id").await;
// Returns: Option<(successful, failed, channel_full)>

// Get statistics for all modules
let all_stats = event_manager.get_all_delivery_stats().await;
// Returns: HashMap<module_id, (successful, failed, channel_full)>

// Reset statistics (for testing)
event_manager.reset_delivery_stats("module_id").await;

Event Subscribers

// Get list of subscribers for an event type
let subscribers = event_manager.get_subscribers(EventType::NewBlock).await;
// Returns: Vec<module_id>

Best Practices

For Module Developers

- Subscribe Early: Subscribe to events as soon as possible after handshake

- Handle Events Quickly: Keep event handlers fast and non-blocking

- Monitor Statistics: Check delivery statistics to ensure events are received

- Handle ModuleLoaded: Always handle ModuleLoaded to know about other modules

- Graceful Shutdown: Handle NodeShutdown and DataMaintenance (urgency: “high”)
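One way to keep handlers fast is to have the handler only enqueue the event and let a worker thread do the expensive part. The sketch below uses standard-library threads and channels. `spawn_worker`, `on_event`, and the string events are hypothetical, and a real module would likely use the SDK's async runtime and bound this internal queue.

```rust
use std::sync::mpsc::{channel, Receiver, Sender};
use std::thread;

/// Spawns a worker that processes events off the receive path.
/// `slow_work` stands in for whatever expensive handling a module does.
/// The worker stops once every sender has been dropped and returns its results.
pub fn spawn_worker<F>(slow_work: F) -> (Sender<String>, thread::JoinHandle<Vec<String>>)
where
    F: Fn(&str) -> String + Send + 'static,
{
    // Unbounded queue for brevity; a real module would bound it.
    let (tx, rx): (Sender<String>, Receiver<String>) = channel();
    let handle = thread::spawn(move || {
        rx.iter()
            .map(|event| slow_work(&event))
            .collect::<Vec<String>>()
    });
    (tx, handle)
}

/// The event handler itself only enqueues, so it returns immediately.
/// Returns false if the worker has already shut down.
pub fn on_event(queue: &Sender<String>, event: &str) -> bool {
    queue.send(event.to_string()).is_ok()
}
```

With this split, the module drains its event channel promptly, so the node is less likely to see a full channel and drop events.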

For Node Developers

- Publish Consistently: Publish events at consistent points in the code

- Use EventPublisher: Use EventPublisher for all event publishing

- Monitor Statistics: Monitor delivery statistics to identify slow modules

- Handle Errors: Log warnings for failed event deliveries

- Test Integration: Test event delivery in integration tests

Common Integration Scenarios

Scenario 1: Module Startup

- Module process spawned

- Module connects via IPC

- Module sends Handshake

- Module subscribes to events

- Module receives ModuleLoaded for all already-loaded modules

- ModuleLoaded published for this module (after subscription)
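The startup sequence can be read as a small state machine in which each step is valid only in one state. The states, steps, and `advance` function below are hypothetical names chosen for illustration. The replay of ModuleLoaded for already-loaded modules is folded into the `Subscribed` state rather than modelled separately.

```rust
/// Hypothetical startup states for one module.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum StartupState {
    Spawned,
    Connected,
    HandshakeSent,
    Subscribed,
    Operational,
}

/// Hypothetical steps that move a module through startup.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Step {
    ConnectIpc,
    SendHandshake,
    SubscribeEvents,
    ModuleLoadedPublished,
}

/// Applies one step, rejecting anything that arrives out of order.
pub fn advance(state: StartupState, step: Step) -> Result<StartupState, String> {
    use StartupState::*;
    match (state, step) {
        (Spawned, Step::ConnectIpc) => Ok(Connected),
        (Connected, Step::SendHandshake) => Ok(HandshakeSent),
        (HandshakeSent, Step::SubscribeEvents) => Ok(Subscribed),
        // ModuleLoaded for this module is only valid after it has subscribed.
        (Subscribed, Step::ModuleLoadedPublished) => Ok(Operational),
        (s, t) => Err(format!("step {:?} is not valid in state {:?}", t, s)),
    }
}
```

Encoding the order this way makes the key rule explicit: ModuleLoaded for a module cannot be published before that module has subscribed.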

Scenario 2: Hotloaded Module

- Module B loads while Module A is already running

- Module B subscribes to events

- Module B receives ModuleLoaded for Module A

- ModuleLoaded published for Module B

- Module A receives ModuleLoaded for Module B

Scenario 3: Slow Module

- Module receives events slowly

- Event channel fills up (100 events)

- New events are dropped with warning

- Statistics track channel full count

- Module subscription is NOT removed (module is slow, not dead)

Scenario 4: Dead Module

- Module process crashes

- Event channel is closed

- Event delivery fails

- Module subscription is automatically removed

- Statistics track failed delivery count

Scenario 5: Governance Event Flow

- Network receives governance event

- Event published to governance module

- Governance module processes event

- Governance module may publish additional events

- All events are delivered through the same per-module event channel (best-effort, at-most-once)

Configuration

Channel Buffer Size

Currently hardcoded to 100 events per module. Can be made configurable in the future.

Event Statistics

Statistics are kept in memory and reset on node restart. Can be persisted in the future.

Future Improvements

- Configurable Buffer Size: Make channel buffer size configurable per module

- Event Persistence: Persist events for replay after module restart

- Event Filtering: Allow modules to filter events by criteria

- Event Priority: Add priority queue for critical events

- Event Metrics: Add Prometheus metrics for event delivery

- Event Replay: Allow modules to replay missed events

See Also

- Module System - Module system architecture

- Event Consistency - Event timing and consistency guarantees

- Janitorial Events - Maintenance and lifecycle events

- Module IPC Protocol - IPC communication details

Module Event System Consistency

Overview

The module event system is designed to be consistent, minimal, and extensible. All events follow a clear pattern and timing to ensure modules can integrate seamlessly with the node.

Event Timing and Consistency

ModuleLoaded Event

Key Principle: ModuleLoaded events are only published AFTER a module has subscribed (after startup is complete).

Flow:

- Module process is spawned

- Module connects via IPC and sends Handshake

- Module sends SubscribeEvents request

- At subscription time:

  - Module receives ModuleLoaded events for all already-loaded modules (hotloaded modules get existing modules)

  - ModuleLoaded is published for the newly subscribing module (if it’s loaded)

- Module is now fully operational

Why this design?

- Ensures ModuleLoaded only happens after module is fully ready (subscribed)

- Hotloaded modules automatically receive all existing modules